Explanations Go Linear: Interpretable Meta-Encoding

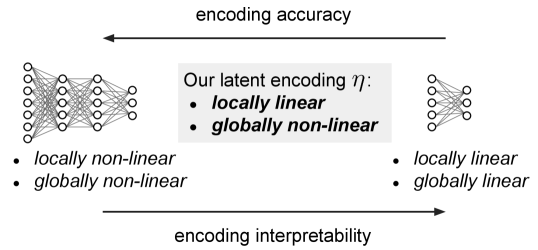

*Explanations Go Linear* (Piaggesi et al., 2025) introduces an interpretable meta-encoding for the post-hoc explanation of tabular models. By linearizing the explanatory structure, it produces attribution patterns that are stable, sparse, and human-readable.
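The paper's own meta-encoding is not reproduced here. As a generic illustration of what a sparse linear explanation of a tabular model can look like, the sketch below fits an L1-penalized (lasso) linear surrogate in a small Gaussian neighbourhood of a single instance, in the style of LIME. Everything in it is an assumption made for illustration: the `black_box` model, the neighbourhood width `sigma`, the penalty `alpha`, and the helpers `lasso_cd` and `local_linear_explanation` are hypothetical and are not the authors' method.

```python
import numpy as np


def black_box(X: np.ndarray) -> np.ndarray:
    """Hypothetical tabular model: nonlinear in x0 and x3, ignores x2 entirely."""
    return 3.0 * X[:, 0] - 2.0 * X[:, 1] + 0.5 * X[:, 0] * X[:, 3]


def soft_threshold(v: float, t: float) -> float:
    """Proximal operator of the L1 norm; this is what produces exact zeros."""
    return float(np.sign(v) * max(abs(v) - t, 0.0))


def lasso_cd(X: np.ndarray, y: np.ndarray, alpha: float, n_iter: int = 200):
    """Coordinate descent for (1/2n)||y - Xw - b||^2 + alpha * ||w||_1."""
    n, d = X.shape
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean  # centering removes the intercept from the loop
    col_sq = (Xc ** 2).sum(axis=0) / n
    w = np.zeros(d)
    for _ in range(n_iter):
        for j in range(d):
            # Partial residual: add feature j's current contribution back in.
            r = yc - Xc @ w + Xc[:, j] * w[j]
            rho = Xc[:, j] @ r / n
            w[j] = soft_threshold(rho, alpha) / col_sq[j]
    b = y_mean - x_mean @ w  # recover the intercept on the original scale
    return w, b


def local_linear_explanation(f, x, sigma=0.1, n_samples=500, alpha=5e-4, seed=0):
    """Fit a sparse linear surrogate of f on Gaussian perturbations around x."""
    rng = np.random.default_rng(seed)  # fixed seed: identical output on every run
    Z = x + sigma * rng.standard_normal((n_samples, x.size))
    return lasso_cd(Z, f(Z), alpha)


x = np.array([1.0, 1.0, 1.0, 0.0])
w, b = local_linear_explanation(black_box, x)
print("attribution weights:", np.round(w, 2))
print("surrogate at x:", round(float(x @ w + b), 3), "model at x:", float(black_box(x[None])[0]))
```

Each coefficient in `w` is read directly as that feature's local attribution. The L1 penalty drives the weight of the ignored feature `x2` to exactly zero, which is the kind of sparsity the summary refers to, and the fixed seed makes the explanation reproducible across runs. The penalty also shrinks the nonzero weights slightly toward zero, so `alpha` trades sparsity against fidelity.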

Unlike opaque embedding or surrogate-stacking strategies, the meta-encoding preserves semantic alignment while improving reproducibility and speed. This makes it suitable for rapid audits in time-sensitive contexts.

This contributes toward scalable inspection workflows for regulated decision pipelines.