Local to global

1

LINE

From the experience of the surveys [GMR2018, BGG2021], we designed and developed various explanation methods, with a focus on local rule-based explainers. Our goal was also to “merge” such local explanations to reach a global consensus on the reasons for the decisions taken by an AI decision support system.

1.1 Local Rule-based Explainer

Our first proposal is the LOcal Rule-based Explainer (LORE) presented in [GMG2019]. LORE is a model-agnostic local explanation method that returns as an explanation a “factual” rule revealing the reasons for a decision, and a set of counterfactual rules illustrating how to change the classification outcome. The first peculiarity of LORE is that it adopts a synthetic neighborhood generator based on a genetic algorithm. The second peculiarity is that it adopts a decision tree as a local surrogate, so that (i) decision rules can naturally be derived from a root-to-leaf path in the tree, and (ii) counterfactuals can be extracted by symbolic reasoning over the tree.

1.2 Plausible Data-Agnostic Local Explanations

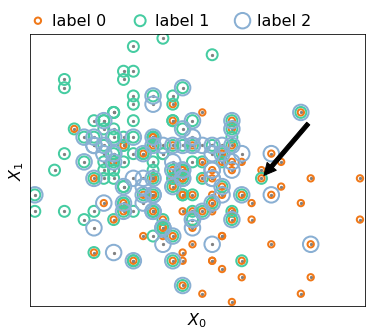

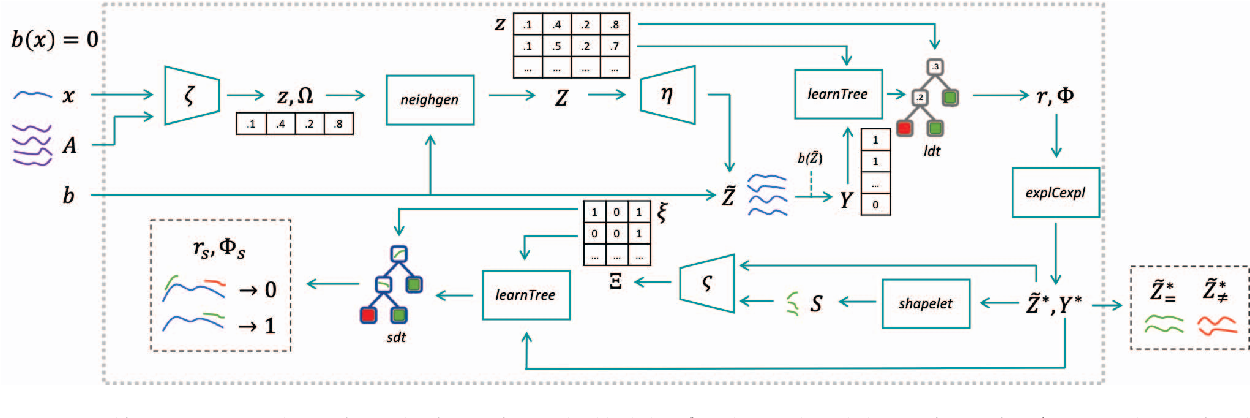

LORE was initially designed for tabular data and binary classification problems. We extended it to other data types and to multiclass problems, and we are currently working on some limitations of LORE related to the stability and actionability of its explanations. In line with LIME [1], our idea was to extend LORE to work on any data type. Our objective was to define a local, model-agnostic and data-agnostic explanation framework that explains the decisions taken by obscure black-box classifiers on specific input instances, so that the proposal is not tied to a specific type of data or classifier.
Besides being model-agnostic, LIME is also data-agnostic. However, it employs conceptually different neighborhood generation strategies for tabular data, images, and texts. For images, LIME randomly replaces actual super-pixels with super-pixels containing a fixed color. For texts, it randomly removes words. Thus, for both images and texts LIME “suppresses” parts of the actual information in the data. For tabular data, on the other hand, LIME assumes uniform distributions for categorical attributes and normal distributions for continuous ones. These limitations prevent LIME from training the local regressor used to extract the explanation on meaningful synthetic instances. Our proposal overcomes them by guaranteeing comparable synthetic data generation across all data types, which yields meaningful synthetic instances for learning interpretable local surrogate models. Specifically, we extend LORE [GMG2019] by exploiting the latent feature space learned through different types of autoencoders [2] to generate plausible synthetic instances during the neighborhood generation process.
Given an instance of any type classified by a black box, Latent-LORE (LLORE) allows instantiating a data-specific explainer that follows the structure of the explanation framework and returns a meaningful explanation of the reasons for the classification. LLORE-based approaches work as follows. First, they generate synthetic instances in the latent feature space using a pre-trained autoencoder (GAN, AAE, VAE, etc.). Then, they learn a latent decision tree classifier. After that, they select and decode the synthetic instances that respect the latent local decision rules observed on the decision tree. Finally, independently of the data type, they return an explanation that always consists of a set of synthetic exemplar and counter-exemplar instances, i.e., instances classified with the same label as the instance to explain and with a different label, respectively, which can be visually analyzed to understand the reasons for the classification. Additionally, a data-specific explanation can be built on the exemplars and counter-exemplars. We instantiated LLORE for images [GMG2019, GMM2020], time series [GMS2020] and text [LGR2020], realizing ad-hoc logic-based explanations. Extensive experiments on datasets of different types, explaining different black-box classifiers, empirically show that LLORE-based explainers outperform existing explanation methods, providing meaningful, stable, useful, and understandable explanations. In [MGY2021, MBG2021] we employed ABELE, the LLORE instantiation for images, in a case study on skin lesion diagnosis, illustrating how the practitioner can be provided with explanations of the decisions of a Deep Neural Network (DNN). We showed that, after being customized and carefully trained, ABELE can produce meaningful explanations that help practitioners. The latent space analysis also suggests an interesting partitioning of the images over the latent space.
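The LLORE steps listed above can be illustrated with a deliberately small sketch. Everything in it is an assumption made for illustration: `encode` and `decode` are an invertible linear map standing in for a trained autoencoder, `black_box` is a toy classifier, and a depth-1 decision stump replaces the latent decision tree. It is not the implementation of any of the cited explainers.

```python
import random

# Toy stand-ins (assumptions): a real instantiation would plug in a trained
# autoencoder and the actual black-box classifier.
def encode(x):
    return [x[0] + x[1], x[0] - x[1]]

def decode(z):
    return [(z[0] + z[1]) / 2, (z[0] - z[1]) / 2]

def black_box(x):
    return 1 if x[0] + x[1] > 1.0 else 0

def fit_stump(Z, y):
    """Depth-1 surrogate: pick the (dimension, threshold) split with the
    highest accuracy. Assumes the latent points are not all identical."""
    best = None
    for d in range(len(Z[0])):
        for thr in sorted(z[d] for z in Z):
            left = [lab for z, lab in zip(Z, y) if z[d] <= thr]
            right = [lab for z, lab in zip(Z, y) if z[d] > thr]
            if not left or not right:
                continue
            l_lab = max(set(left), key=left.count)
            r_lab = max(set(right), key=right.count)
            acc = (left.count(l_lab) + right.count(r_lab)) / len(y)
            if best is None or acc > best[0]:
                best = (acc, d, thr)
    return best[1], best[2]

def explain(x, n=200, scale=0.5, seed=0):
    rng = random.Random(seed)
    z = encode(x)
    # 1) synthetic neighborhood generated in the latent space
    Z = [[zi + rng.gauss(0.0, scale) for zi in z] for _ in range(n)]
    # decode and label each synthetic instance with the black box
    X = [decode(zi) for zi in Z]
    y = [black_box(xi) for xi in X]
    # 2) latent surrogate (a stump here, a decision tree in LLORE)
    d, thr = fit_stump(Z, y)
    on_left = z[d] <= thr
    label = black_box(x)
    # 3) keep decoded instances that respect (or violate) the latent rule
    exemplars = [xi for zi, xi, yi in zip(Z, X, y)
                 if (zi[d] <= thr) == on_left and yi == label]
    counter_exemplars = [xi for zi, xi, yi in zip(Z, X, y)
                         if (zi[d] <= thr) != on_left and yi != label]
    rule = f"latent[{d}] {'<=' if on_left else '>'} {thr:.3f}"
    return rule, exemplars, counter_exemplars

rule, exemplars, counter_exemplars = explain([0.9, 0.4])
print(rule, len(exemplars), len(counter_exemplars))
```

In a real setting the exemplars and counter-exemplars would be decoded images, time series or texts shown to the user; here they are just two-feature points.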
[MGY2021, MBG2021] also report a survey involving domain experts in healthcare and laypeople, which supports the hypothesis that explanation methods without consistent validation are not useful. As highlighted by these works, in the context of synthetic data generation for local explanation methods it is important to generate data samples located within “local” areas surrounding specific instances. The problem with generative adversarial networks and autoencoders is that they require a large quantity of data and a non-negligible training time. In addition, such generative approaches are suited only to particular types of data. In [GM2020] we overcome these drawbacks by proposing DAG, a Data-Agnostic neighborhood Generation approach that, given an input instance and a (small) support set, returns a set of local, realistic synthetic instances. DAG applies a data transformation that enables generation for any type of input data. It is based on a set of generative operators inspired by genetic programming. These operators apply specific vector perturbations through a fast procedure that only requires a small set of instances to support the data generation. Extensive experiments on different types of data (tabular data, images, time series, and texts) and against state-of-the-art local neighborhood generators show the effectiveness of DAG in producing realistic instances independently of the nature of the data.

1.3 Local Explanation 4 Health

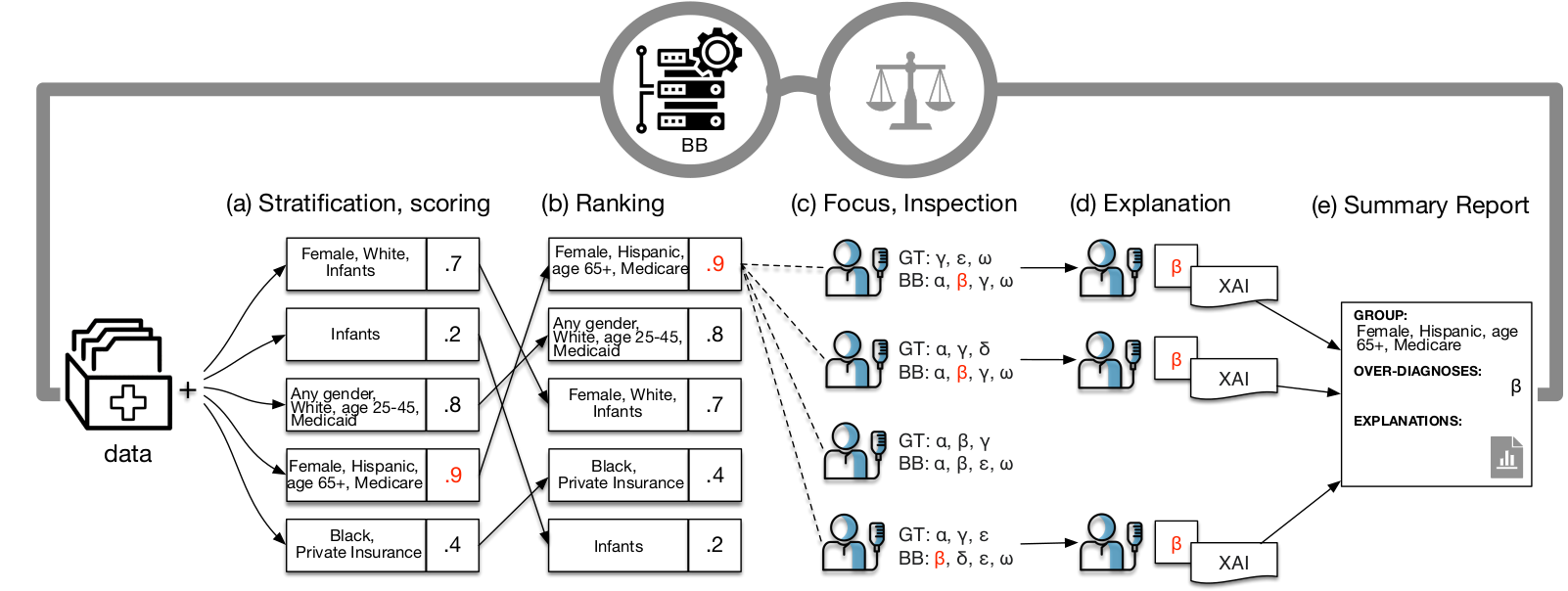

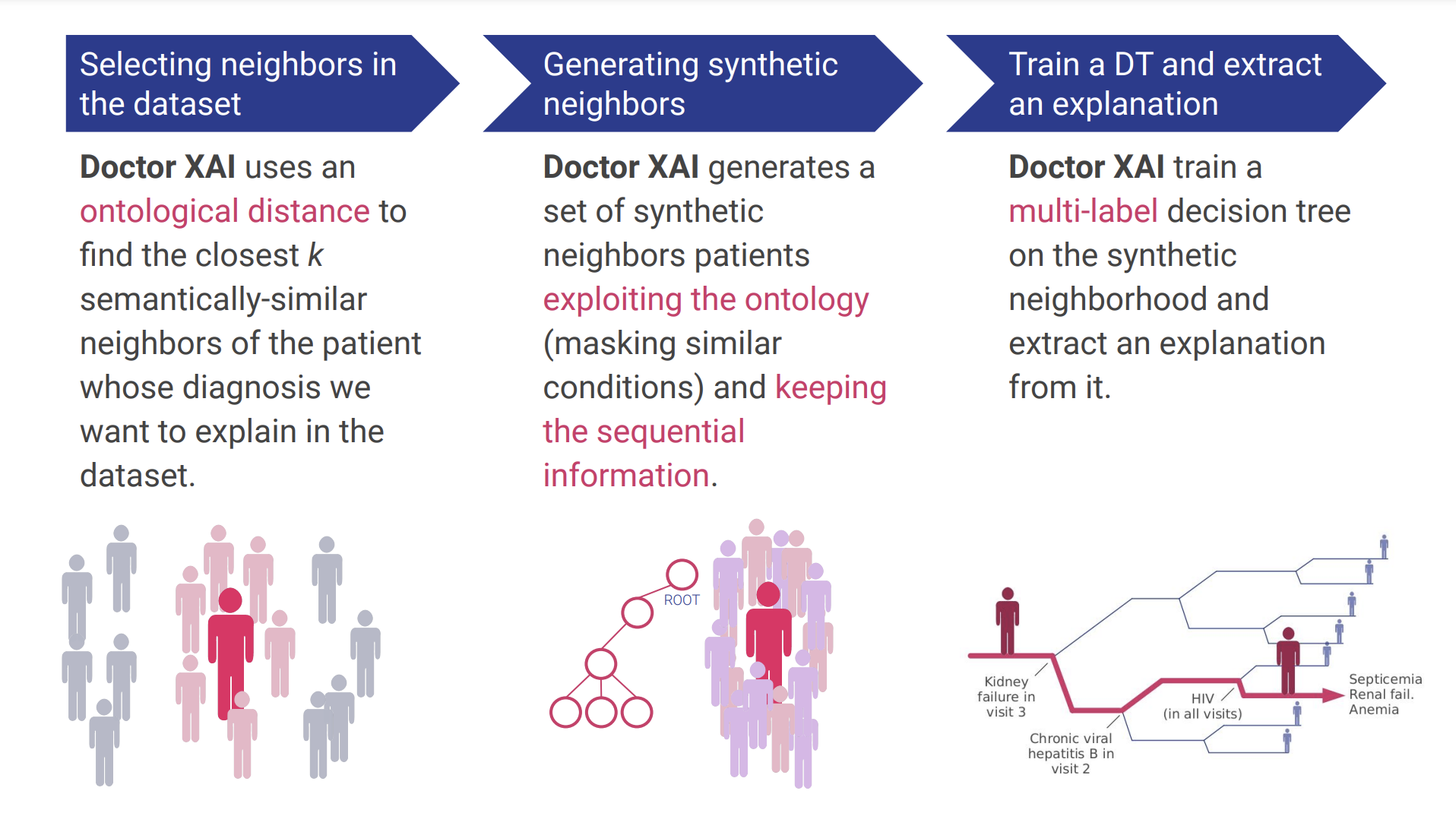

To enable explainable AI systems to support medical decision-making, XAI techniques must be able to deal with the typical characteristics of healthcare data. We addressed this problem incrementally with the contributions presented in [PGM2019] and [PPP2020]. [PGM2019] presents MARLENA (Multi-lAbel RuLe-based ExplaNAtions), a model-agnostic XAI methodology that addresses the outcome explanation problem for multi-label black-box outcomes. Building on the insights gained from the experiments in [PGM2019], we developed Doctor XAI [PPP2020], a model-agnostic technique that is suitable for multi-label black-box outcomes and can also deal with ontology-linked and sequential data. Two key aspects of the approach are that it exploits the ontology in creating the synthetic neighborhood, and that it employs a novel encoder/decoder scheme for sequential data that preserves the interpretability of the features. The ontological perturbation creates synthetic instances that account for local feature interactions by perturbing the neighbors available in the dataset, masking semantically similar features. We tested Doctor XAI in two scenarios. First, we tested the ability of Doctor XAI, combined with a local-to-global approach, to audit a fictional commercial black box. This resulted in FairLens [PPB2021], a framework for auditing clinical decision support systems. FairLens first stratifies the available patient data according to demographic attributes such as age, ethnicity, gender and healthcare insurance; it then assesses the model performance on these groups, highlighting the most common misclassifications. Finally, FairLens allows the expert to examine a misclassification of interest by exploiting Doctor XAI to explain which elements of the affected patients' clinical history drive the model error in the problematic group.
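The stratify-then-assess step attributed to FairLens above can be sketched as follows. This is a minimal illustration under stated assumptions: the record layout (`true` and `pred` as label sets), the function name `audit_by_group`, and the errors-per-patient figure are hypothetical and are not the disparity metric of [PPB2021].

```python
from collections import Counter, defaultdict

def audit_by_group(records, group_key):
    """Group multi-label predictions by a demographic attribute and count,
    per group, which labels are missed or spuriously predicted."""
    stats = defaultdict(lambda: {"n": 0, "errors": Counter()})
    for r in records:
        g = stats[r[group_key]]
        g["n"] += 1
        for label in r["true"] - r["pred"]:
            g["errors"][("missed", label)] += 1
        for label in r["pred"] - r["true"]:
            g["errors"][("spurious", label)] += 1
    report = {}
    for group, g in stats.items():
        report[group] = {
            "patients": g["n"],
            # illustrative error rate, not the FairLens disparity metric
            "errors_per_patient": sum(g["errors"].values()) / g["n"],
            "most_common": g["errors"].most_common(1),
        }
    return report

# Made-up toy records, for illustration only.
records = [
    {"age_band": "18-40", "true": {"diabetes"}, "pred": {"diabetes"}},
    {"age_band": "18-40", "true": {"asthma"}, "pred": {"asthma", "copd"}},
    {"age_band": "65+", "true": {"diabetes", "ckd"}, "pred": {"diabetes"}},
    {"age_band": "65+", "true": {"ckd"}, "pred": set()},
]
report = audit_by_group(records, "age_band")
print(report)
```

The most common misclassification of the worst-performing group is the natural candidate to hand over to a local explainer for closer inspection.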
We validated FairLens' ability to highlight bias in multi-label clinical decision support systems (DSSs) by introducing a multi-label-appropriate metric of disparity and showing its efficacy against other standard metrics. Finally, in [PBF2022] we presented the collective effort of our interdisciplinary team of data scientists, human-computer interaction experts and designers to develop a human-centered, explainable AI system for clinical decision support. Using an iterative design approach that involves healthcare providers as end-users, we present the first cycle of prototyping, testing and redesigning of Doctor XAI and its explanation user interface. We first present the Doctor XAI concept, which stems from patient data and healthcare application requirements. We then develop the initial prototype of the explanation user interface and perform a user study to test its perceived trustworthiness and collect healthcare providers' feedback. Finally, we exploit the users' feedback to co-design a more human-centered XAI user interface, taking into account design principles such as progressive disclosure of information.

1.4 Local to Global Approaches

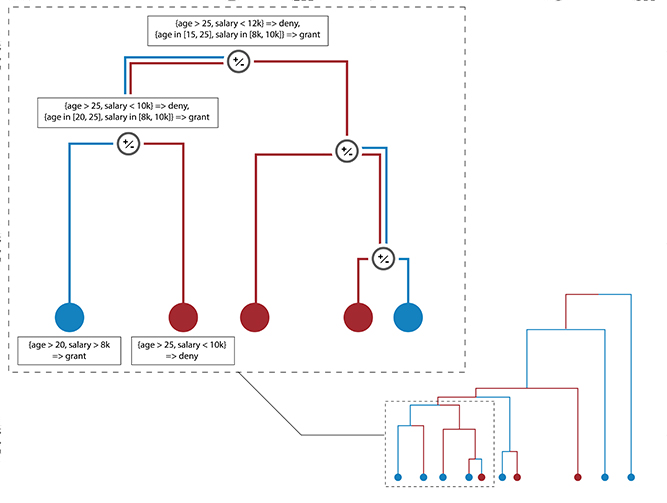

Local explanations enjoy several properties: they are relatively fast and easy to extract, precise, and possibly diverse. Conversely, global explanations are more cumbersome to extract and, having a larger scope, more general. Thus, the two families have complementary properties. The Local to Global explanation paradigm [PGG2018, PGG2019] is a natural extension of the Local and Global paradigms, and aims to exploit the fidelity and ease of extraction of Local explanations to generate faithful, general, and simple Global explanations. In our work, we have focused on explanations in the form of axis-parallel decision rules, and have proposed two algorithms to tackle them, namely Rule Relevance Score (RRS) [SGM2019] and GLocalX [SGM2021]. RRS is a simple scoring framework in which we select, rather than edit, the local explanations: global explanations are constructed by choosing among the local ones. RRS uses a multi-faceted scoring formula that ranks explanations according to their fidelity, coverage and outlier coverage, where the latter rewards rules that explain seldom-explained records. GLocalX [SGM2021] relies on three assumptions: (i) logical explainability, that is, explanations are best provided in a logical form that can be reasoned upon; (ii) local explainability, that is, regardless of the complexity of the decision boundary of the black box, locally it can be accurately approximated by an explanation; (iii) composability, that is, we can compose local explanations by leveraging their logical form. Starting from a set of local explanations in the form of decision rules, which constitute the leaves of the structure, GLocalX iteratively merges explanations in a bottom-up fashion to create a hierarchical merge structure that yields global explanations on its top layer.
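A selection-based scheme in the spirit of RRS can be sketched as below. The rule encoding (half-open intervals per feature), the equal weights, and the "rarity" term used as a proxy for outlier coverage are all assumptions made for illustration; the actual scoring formula is the one defined in [SGM2019].

```python
def covers(rule, record):
    """A rule covers a record when every premise interval [lo, hi) holds."""
    return all(lo <= record[f] < hi for f, (lo, hi) in rule["premise"].items())

def score_rules(rules, records, bb_labels, weights=(1.0, 1.0, 1.0)):
    cover = [[covers(r, x) for x in records] for r in rules]
    # how many rules explain each record
    times_covered = [sum(c[i] for c in cover) for i in range(len(records))]
    w_f, w_c, w_o = weights
    scores = []
    for r, c in zip(rules, cover):
        idx = [i for i, hit in enumerate(c) if hit]
        if not idx:
            scores.append(0.0)
            continue
        # fidelity: agreement with the black box on covered records
        fidelity = sum(bb_labels[i] == r["label"] for i in idx) / len(idx)
        coverage = len(idx) / len(records)
        # assumed proxy for outlier coverage: rarely explained records count more
        rarity = sum(1.0 / times_covered[i] for i in idx) / len(idx)
        scores.append(w_f * fidelity + w_c * coverage + w_o * rarity)
    return scores

def select_global(rules, scores, k):
    """Global explanation = the k best-scoring local rules, left unedited."""
    ranked = sorted(range(len(rules)), key=lambda i: scores[i], reverse=True)
    return [rules[i] for i in ranked[:k]]

INF = float("inf")
# Made-up toy data, for illustration only.
records = [{"age": 25, "income": 20}, {"age": 45, "income": 60},
           {"age": 60, "income": 80}, {"age": 30, "income": 90}]
bb_labels = ["deny", "grant", "grant", "grant"]
rules = [
    {"premise": {"income": (50, INF)}, "label": "grant"},
    {"premise": {"age": (40, INF)}, "label": "grant"},
    {"premise": {"income": (0, 50)}, "label": "deny"},
]
scores = score_rules(rules, records, bb_labels)
print([r["label"] for r in select_global(rules, scores, k=2)])
```

GLocalX differs from this selection scheme in that it merges and generalizes rules rather than only ranking them, which this sketch does not attempt.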
GLocalX strikes a balance between fidelity and simplicity, reaching state-of-the-art fidelity while yielding small sets of compact global explanations. It compares favorably with natively global models, such as Decision Trees and CPAR, which have direct access to the whole training data rather than to the local explanations. It is only slightly less faithful than the most faithful model (~2% less faithful than a Decision Tree) while being far simpler (up to one order of magnitude fewer output rules). When compared with models of similar complexity, such as a Pruned Decision Tree, GLocalX is slightly more faithful and less complex.

1.5 Towards Interpretable-by-design Models

In parallel with the design of local and global post-hoc explainers, and in line with [3], we also started to explore predictive models that are interpretable by design, i.e., models that return the prediction and allow us to understand the reasons that lead to it. Indeed, if the machine logic is transparent and accessible, as humans we tend to place more trust in a decision process whose logic is similar to that of a human being than in a reasoning that we can understand but that lies outside the human way of thinking [4]. In [GD2021] we present MAPIC, a MAtrix Profile-based Interpretable time series Classifier. MAPIC is an interpretable model for time series classification that achieves high levels of accuracy and efficiency while keeping both the classification and the classification model interpretable. In designing MAPIC we followed the line of research based on shapelets. However, we replaced the inefficient approaches adopted in the state of the art to search for the most discriminative subsequences with the patterns that can be extracted from a structure named Matrix Profile [5]. In short, the Matrix Profile (MP) represents the distances between all subsequences and their nearest neighbors. From an MP it is possible to efficiently extract patterns that characterize a time series, such as motifs and discords. Motifs are subsequences of a time series that are very similar to each other, while discords are subsequences that are very different from any other subsequence. As a classification model, MAPIC adopts a decision tree classifier due to its intrinsic interpretability. We empirically demonstrate that MAPIC outperforms existing approaches with similar interpretability in terms of both accuracy and running time. Together with the results on GLocalX, these results make clear the importance of relying on sound decision tree models.
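To make the Matrix Profile definition above concrete, here is a brute-force sketch that computes it for a short series and reads off one motif and one discord. It is quadratic in the series length, whereas the algorithms behind [5] are far more efficient; the z-normalized Euclidean distance and the exclusion zone of half a window are common conventions assumed here, not details taken from MAPIC.

```python
import math

def znorm(seq):
    """Z-normalize a subsequence so that shape, not offset or scale, matters."""
    mean = sum(seq) / len(seq)
    std = math.sqrt(sum((v - mean) ** 2 for v in seq) / len(seq))
    if std == 0:
        return [0.0] * len(seq)
    return [(v - mean) / std for v in seq]

def matrix_profile(ts, m):
    """For each length-m subsequence, the distance to its nearest neighbor,
    ignoring trivially overlapping neighbors (exclusion zone, assumed m // 2)."""
    subs = [znorm(ts[i:i + m]) for i in range(len(ts) - m + 1)]
    excl = m // 2
    profile = []
    for i, a in enumerate(subs):
        best = math.inf
        for j, b in enumerate(subs):
            if abs(i - j) <= excl:
                continue
            dist = math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))
            best = min(best, dist)
        profile.append(best)
    return profile

# Toy series: a repeating pattern with one injected spike at position 13.
ts = [0.0, 1.0, 0.0, -1.0] * 6
ts[13] = 5.0
profile = matrix_profile(ts, m=4)
motif = min(range(len(profile)), key=profile.__getitem__)    # lowest value
discord = max(range(len(profile)), key=profile.__getitem__)  # highest value
print(motif, discord)
```

The motif start has a near-zero profile value because the pattern repeats, while the discord falls on a window that contains the spike. Subsequences like these are the kind of candidate features a shapelet-style tree classifier can test against.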
A weak point of traditional decision trees is that they are not very stable, and a common procedure to stabilize them is to merge various trees into a single tree. In a certain sense, this is a form of explanation of a set of decision trees with a single model. Several proposals exist in the literature for traditional decision trees, but merging operations are lacking for oblique trees and forests of oblique trees. Thus, in [BGM2021] we combine XAI and the merging of decision trees. Given any accurate and complex tree-based classifier, our aim is to approximate it with a single interpretable decision tree that guarantees comparable accuracy and a complexity low enough to let us understand the logic it follows for the classification. We propose a Single-tree Approximation MEthod (SAME) that exploits a procedure for merging decision trees, a post-hoc explanation strategy, and a combination of the two to turn any tree-based classifier into a single, interpretable decision tree. Given a tree-based classifier, the idea of SAME is to reduce each approximation problem to another one for which a solution is known, in a sort of “cascade of approximations” with several available alternatives. This allows SAME to turn Random Forests, Oblique Trees and Oblique Forests into a single decision tree. Implementing SAME required adapting existing procedures for merging traditional decision trees to oblique trees, moving from an intensional approach to an extensional one for efficiency reasons. Experiments on eight tabular datasets of different size and dimensionality compare SAME against a baseline approach (PHDT) that directly approximates any classifier with a decision tree. We show that SAME is efficient and that the retrieved single decision tree is at least as accurate as the original non-interpretable tree-based model.

Publications

1.

[GMR2018] Guidotti Riccardo, Monreale Anna, Ruggieri Salvatore, Turini Franco, Giannotti Fosca, Pedreschi Dino (2022) - ACM Computing Surveys. In ACM Computing Surveys (CSUR), 51(5), 1-42.

Abstract

In recent years, many accurate decision support systems have been constructed as black boxes, that is as systems that hide their internal logic to the user. This lack of explanation constitutes both a practical and an ethical issue. The literature reports many approaches aimed at overcoming this crucial weakness, sometimes at the cost of sacrificing accuracy for interpretability. The applications in which black box decision systems can be used are various, and each approach is typically developed to provide a solution for a specific problem and, as a consequence, it explicitly or implicitly delineates its own definition of interpretability and explanation. The aim of this article is to provide a classification of the main problems addressed in the literature with respect to the notion of explanation and the type of black box system. Given a problem definition, a black box type, and a desired explanation, this survey should help the researcher to find the proposals more useful for his own work. The proposed classification of approaches to open black box models should also be useful for putting the many research open questions in perspective.

2.

[GMG2019] Guidotti Riccardo, Monreale Anna, Giannotti Fosca, Pedreschi Dino, Ruggieri Salvatore, Turini Franco (2021) - IEEE Intelligent Systems. In IEEE Intelligent Systems

Abstract

The rise of sophisticated machine learning models has brought accurate but obscure decision systems, which hide their logic, thus undermining transparency, trust, and the adoption of artificial intelligence (AI) in socially sensitive and safety-critical contexts. We introduce a local rule-based explanation method, providing faithful explanations of the decision made by a black box classifier on a specific instance. The proposed method first learns an interpretable, local classifier on a synthetic neighborhood of the instance under investigation, generated by a genetic algorithm. Then, it derives from the interpretable classifier an explanation consisting of a decision rule, explaining the factual reasons of the decision, and a set of counterfactuals, suggesting the changes in the instance features that would lead to a different outcome. Experimental results show that the proposed method outperforms existing approaches in terms of the quality of the explanations and of the accuracy in mimicking the black box.

3.

[SGM2021] Setzu Mattia, Guidotti Riccardo, Monreale Anna, Turini Franco, Pedreschi Dino, Giannotti Fosca (2021) - Artificial Intelligence. In Artificial Intelligence

Abstract

Artificial Intelligence (AI) has come to prominence as one of the major components of our society, with applications in most aspects of our lives. In this field, complex and highly nonlinear machine learning models such as ensemble models, deep neural networks, and Support Vector Machines have consistently shown remarkable accuracy in solving complex tasks. Although accurate, AI models often are “black boxes” which we are not able to understand. Relying on these models has a multifaceted impact and raises significant concerns about their transparency. Applications in sensitive and critical domains are a strong motivational factor in trying to understand the behavior of black boxes. We propose to address this issue by providing an interpretable layer on top of black box models by aggregating “local” explanations. We present GLocalX, a “local-first” model agnostic explanation method. Starting from local explanations expressed in form of local decision rules, GLocalX iteratively generalizes them into global explanations by hierarchically aggregating them. Our goal is to learn accurate yet simple interpretable models to emulate the given black box, and, if possible, replace it entirely. We validate GLocalX in a set of experiments in standard and constrained settings with limited or no access to either data or local explanations. Experiments show that GLocalX is able to accurately emulate several models with simple and small models, reaching state-of-the-art performance against natively global solutions. Our findings show how it is often possible to achieve a high level of both accuracy and comprehensibility of classification models, even in complex domains with high-dimensional data, without necessarily trading one property for the other. This is a key requirement for a trustworthy AI, necessary for adoption in high-stakes decision making applications.

4.

[GMR2018a] Guidotti Riccardo, Monreale Anna, Ruggieri Salvatore, Pedreschi Dino, Turini Franco, Giannotti Fosca (2018) - arXiv preprint

Abstract

The recent years have witnessed the rise of accurate but obscure decision systems which hide the logic of their internal decision processes to the users. The lack of explanations for the decisions of black box systems is a key ethical issue, and a limitation to the adoption of machine learning components in socially sensitive and safety-critical contexts. Therefore, we need explanations that reveal the reasons why a predictor takes a certain decision. In this paper we focus on the problem of black box outcome explanation, i.e., explaining the reasons of the decision taken on a specific instance. We propose LORE, an agnostic method able to provide interpretable and faithful explanations. LORE first learns a local interpretable predictor on a synthetic neighborhood generated by a genetic algorithm. Then it derives from the logic of the local interpretable predictor a meaningful explanation consisting of: a decision rule, which explains the reasons of the decision; and a set of counterfactual rules, suggesting the changes in the instance's features that lead to a different outcome. Wide experiments show that LORE outperforms existing methods and baselines both in the quality of explanations and in the accuracy in mimicking the black box.

5.

[CGG2023] Martina Cinquini, Fosca Giannotti, Riccardo Guidotti, Andrea Mattei (2023) - Explainable Artificial Intelligence. First World Conference, xAI 2023

Abstract

Missing data are quite common in real scenarios when using Artificial Intelligence (AI) systems for decision-making with tabular data and effectively handling them poses a significant challenge for such systems. While some machine learning models used by AI systems can tackle this problem, the existing literature lacks post-hoc explainability approaches able to deal with predictors that encounter missing data. In this paper, we extend a widely used local model-agnostic post-hoc explanation approach that enables explainability in the presence of missing values by incorporating state-of-the-art imputation methods within the explanation process. Since our proposal returns explanations in the form of feature importance, the user will be aware also of the importance of a missing value in a given record for a particular prediction. Extensive experiments show the effectiveness of the proposed method with respect to some baseline solutions relying on traditional data imputation.

6.

[SGM2023] Francesco Spinnato, Riccardo Guidotti, Anna Monreale, Mirco Nanni, Dino Pedreschi, Fosca Giannotti (2023) - ACM Transactions on Knowledge Discovery from Data

Abstract

The growing availability of time series data has increased the usage of classifiers for this data type. Unfortunately, state-of-the-art time series classifiers are black-box models and, therefore, not usable in critical domains such as healthcare or finance, where explainability can be a crucial requirement. This paper presents a framework to explain the predictions of any black-box classifier for univariate and multivariate time series. The provided explanation is composed of three parts. First, a saliency map highlighting the most important parts of the time series for the classification. Second, an instance-based explanation exemplifies the black-box’s decision by providing a set of prototypical and counterfactual time series. Third, a factual and counterfactual rule-based explanation, revealing the reasons for the classification through logical conditions based on subsequences that must, or must not, be contained in the time series. Experiments and benchmarks show that the proposed method provides faithful, meaningful, stable, and interpretable explanations.

7.

[PSG2023] Mattia Poggioli, Francesco Spinnato, Riccardo Guidotti (2023) - Proceedings of the 25th international conference on Discovery Science (DS), 2022, Montpellier. In Lecture Notes in Computer Science

Abstract

Time Series Analysis (TSA) and Natural Language Processing (NLP) are two domains of research that have seen a surge of interest in recent years. NLP focuses mainly on enabling computers to manipulate and generate human language, whereas TSA identifies patterns or components in time-dependent data. Given their different purposes, there has been limited exploration of combining them. In this study, we present an approach to convert text into time series to exploit TSA for exploring text properties and to make NLP approaches interpretable for humans. We formalize our Text to Time Series framework as a feature extraction and aggregation process, proposing a set of different conversion alternatives for each step. We experiment with our approach on several textual datasets, showing the conversion approach’s performance and applying it to the field of interpretable time series classification.

8.

[BGG2023] Francesco Bodria, Fosca Giannotti, Riccardo Guidotti, Francesca Naretto, Dino Pedreschi, Salvatore Rinzivillo (2023) - Springer Science+Business Media, LLC, part of Springer Nature. In Data Mining and Knowledge Discovery

Abstract

The rise of sophisticated black-box machine learning models in Artificial Intelligence systems has prompted the need for explanation methods that reveal how these models work in an understandable way to users and decision makers. Unsurprisingly, the state of the art currently exhibits a plethora of explainers providing many different types of explanations. With the aim of providing a compass for researchers and practitioners, this paper proposes a categorization of explanation methods from the perspective of the type of explanation they return, also considering the different input data formats. The paper accounts for the most representative explainers to date, also discussing similarities and discrepancies of returned explanations through their visual appearance. A companion website to the paper is provided as a continuous update to new explainers as they appear. Moreover, a subset of the most robust and widely adopted explainers is benchmarked with respect to a repertoire of quantitative metrics.

9.

[MBG2023] Carlo Metta, Andrea Beretta, Riccardo Guidotti, Yuan Yin, Patrick Gallinari, Salvatore Rinzivillo, Fosca Giannotti (2023) - Springer Nature. In International Journal of Data Science and Analytics

Abstract

A key issue in critical contexts such as medical diagnosis is the interpretability of the deep learning models adopted in decision-making systems. Research in eXplainable Artificial Intelligence (XAI) is trying to solve this issue. However, XAI approaches are often tested only on generalist classifiers and do not represent realistic problems such as those of medical diagnosis. In this paper, we aim at improving the trust and confidence of users towards automatic AI decision systems in the field of medical skin lesion diagnosis by customizing an existing XAI approach for explaining an AI model able to recognize different types of skin lesions. The explanation is generated through the use of synthetic exemplar and counter-exemplar images of skin lesions and our contribution offers the practitioner a way to highlight the crucial traits responsible for the classification decision. A validation survey with domain experts, beginners, and unskilled people shows that the use of explanations improves trust and confidence in the automatic decision system. Also, an analysis of the latent space adopted by the explainer unveils that some of the most frequent skin lesion classes are distinctly separated. This phenomenon may stem from the intrinsic characteristics of each class and may help resolve common misclassifications made by human experts.

12.

[LSG2023] Landi Cristiano, Spinnato Francesco, Guidotti Riccardo, Monreale Anna, Nanni Mirco (2023) - International Symposium on Intelligent Data Analysis. In Proceedings of the 2023 conference Advances in Intelligent Data Analysis XXI

Abstract

The large and diverse availability of mobility data enables the development of predictive models capable of recognizing various types of movements. Through a variety of GPS devices, any moving entity, animal, person, or vehicle can generate spatio-temporal trajectories. This data is used to infer migration patterns, manage traffic in large cities, and monitor the spread and impact of diseases, all critical situations that necessitate a thorough understanding of the underlying problem. Researchers, businesses, and governments use mobility data to make decisions that affect people’s lives in many ways, employing accurate but opaque deep learning models that are difficult to interpret from a human standpoint. To address these limitations, we propose Geolet, a human-interpretable machine-learning model for trajectory classification. We use discriminative sub-trajectories extracted from mobility data to turn trajectories into a simplified representation that can be used as input by any machine learning classifier. We test our approach against state-of-the-art competitors on real-world datasets. Geolet outperforms black-box models in terms of accuracy while being orders of magnitude faster than its interpretable competitors.

13.

[LCM2023] Liguori Angelica, Caroprese Luciano, Minici Marco, Veloso Bruno, Spinnato Francesco, Nanni Mirco, Manco Giuseppe, Gama Joao (2023) - arXiv preprint

Abstract

In real-world scenarios, many phenomena produce a collection of events that occur in continuous time. Point Processes provide a natural mathematical framework for modeling these sequences of events. In this survey, we investigate probabilistic models for modeling event sequences through temporal processes. We revise the notion of event modeling and provide the mathematical foundations that characterize the literature on the topic. We define an ontology to categorize the existing approaches in terms of three families: simple, marked, and spatio-temporal point processes. For each family, we systematically review the existing approaches based on deep learning. Finally, we analyze the scenarios where the proposed techniques can be used for addressing prediction and modeling aspects.

15.

[BGG2023c] Francesco Bodria, Riccardo Guidotti, Fosca Giannotti, Dino Pedreschi (2022) - Proceedings of the 25th international conference on Discovery Science (DS), 2022, Montpellier. In Lecture Notes in Computer Science

Abstract

Many dimensionality reduction methods have been introduced to map a data space into one with fewer features and enhance machine learning models’ capabilities. This reduced space, called latent space, holds properties that allow researchers to understand the data better and produce better models. This work proposes an interpretable latent space that preserves the similarity of data points and supports a new way of learning a classification model that allows prediction and explanation through counterfactual examples. We demonstrate with extensive experiments the effectiveness of the latent space with respect to different metrics in comparison with several competitors, as well as the quality of the achieved counterfactual explanations.

16.

[BGG2023b] Bodria Francesco, Riccardo Guidotti, Fosca Giannotti, Dino Pedreschi (2022) - Proceedings of Data Science and Advanced Analytics (DSAA), 2022 IEEE 9th International Conference. In Proceedings of the 9th IEEE International Conference on Data Science and Advanced Analytics (DSAA)

Abstract

Artificial Intelligence decision-making systems have dramatically increased their predictive performance in recent years, beating humans in many different specific tasks. However, with increased performance has come an increase in the complexity of the black-box models adopted by the AI systems, making the decision process they adopt entirely obscure. Explainable AI is a field that seeks to make AI decisions more transparent by producing explanations. In this paper, we propose T-LACE, an approach able to retrieve post-hoc counterfactual explanations for a given pre-trained black-box model. T-LACE exploits the similarity and linearity properties of a custom-created transparent latent space to build reliable counterfactual explanations. We tested T-LACE on several tabular datasets and provided qualitative evaluations of the generated explanations in terms of similarity, robustness, and diversity. Comparative analysis against various state-of-the-art counterfactual explanation methods shows the higher effectiveness of our approach.

23.

[TSS2022]Andreas Theissler, Francesco Spinnato, Udo Schlegel, Riccardo Guidotti (2022) - IEEE Access. In IEEE Access (Volume: 10)

Abstract

Time series data is increasingly used in a wide range of fields, and it is often relied on in crucial applications and high-stakes decision-making. For instance, sensors generate time series data to recognize different types of anomalies through automatic decision-making systems. Typically, these systems are realized with machine learning models that achieve top-tier performance on time series classification tasks. Unfortunately, the logic behind their prediction is opaque and hard to understand from a human standpoint. Recently, we observed a consistent increase in the development of explanation methods for time series classification justifying the need to structure and review the field. In this work, we (a) present the first extensive literature review on Explainable AI (XAI) for time series classification, (b) categorize the research field through a taxonomy subdividing the methods into time points-based, subsequences-based and instance-based, and (c) identify open research directions regarding the type of explanations and the evaluation of explanations and interpretability.

24.

[G2022]Riccardo Guidotti (2022) - Data Mining and Knowledge Discovery. In Data Mining and Knowledge Discovery

Abstract

Interpretable machine learning aims at unveiling the reasons behind predictions returned by uninterpretable classifiers. One of the most valuable types of explanation consists of counterfactuals. A counterfactual explanation reveals what should have been different in an instance to observe a diverse outcome. For instance, a bank customer asks for a loan that is rejected. The counterfactual explanation consists of what should have been different for the customer in order to have the loan accepted. Recently, there has been an explosion of proposals for counterfactual explainers. The aim of this work is to survey the most recent explainers returning counterfactual explanations. We categorize explainers based on the approach adopted to return the counterfactuals, and we label them according to characteristics of the method and properties of the counterfactuals returned. In addition, we visually compare the explanations, and we report quantitative benchmarking assessing minimality, actionability, stability, diversity, discriminative power, and running time. The results make evident that the current state of the art does not provide a counterfactual explainer able to guarantee all these properties simultaneously.

25.

[MG2022]Marta Marchiori Manerba, Guidotti Riccardo (2022) - Conference on AI, Ethics, and Society (AIES 2022). In Proceedings of the 2022 AAAI/ACM Conference on AI, Ethics, and Society (AIES'22)

Abstract

During each stage of a dataset creation and development process, harmful biases can be accidentally introduced, leading to models that perpetuate marginalization and discrimination of minorities, as the role of the data used during the training is critical. We propose an evaluation framework that investigates the impact on classification and explainability of bias mitigation preprocessing techniques used to assess data imbalances concerning minorities' representativeness and mitigate the skewed distributions discovered. Our evaluation focuses on assessing fairness, explainability and performance metrics. We analyze the behavior of local model-agnostic explainers on the original and mitigated datasets to examine whether the proxy models learned by the explainability techniques to mimic the black-boxes disproportionately rely on sensitive attributes, demonstrating biases rooted in the explainers. We conduct several experiments on known biased datasets to demonstrate our proposal’s novelty and effectiveness for evaluation and bias detection purposes.

Research Line 1▪5

27.

[PBF2022]Panigutti Cecilia, Beretta Andrea, Fadda Daniele, Giannotti Fosca, Pedreschi Dino, Perotti Alan, Rinzivillo Salvatore (2022). In ACM Transactions on Interactive Intelligent Systems

Abstract

eXplainable AI (XAI) involves two intertwined but separate challenges: the development of techniques to extract explanations from black-box AI models, and the way such explanations are presented to users, i.e., the explanation user interface. Despite its importance, the second aspect has received limited attention so far in the literature. Effective AI explanation interfaces are fundamental for allowing human decision-makers to take advantage of and oversee high-risk AI systems effectively. Following an iterative design approach, we present the first cycle of prototyping-testing-redesigning of an explainable AI technique and its explanation user interface for clinical Decision Support Systems (DSS). We first present an XAI technique that meets the technical requirements of the healthcare domain: sequential, ontology-linked patient data, and multi-label classification tasks. We demonstrate its applicability to explain a clinical DSS, and we design a first prototype of an explanation user interface. Next, we test such a prototype with healthcare providers and collect their feedback, with a two-fold outcome: first, we obtain evidence that explanations increase users' trust in the XAI system, and second, we obtain useful insights on the perceived deficiencies of their interaction with the system, so that we can re-design a better, more human-centered explanation interface.

Research Line 1▪3▪4

29.

[SMM2022]Setzu Mattia, Monreale Anna, Minervini Pasquale (2022) - Third Conference on Cognitive Machine Intelligence (COGMI) 2021

Research Line 1▪2

33.

[MGY2021]Carlo Metta, Riccardo Guidotti, Yuan Yin, Patrick Gallinari, Salvatore Rinzivillo (2021) - IOS Press. In HHAI2022: Augmenting Human Intellect, S. Schlobach et al. (Eds.)

Abstract

Explainable AI consists in developing models allowing interaction between decision systems and humans by making the decisions understandable. We propose a case study for skin lesion diagnosis showing how it is possible to provide explanations of the decisions of a deep neural network trained to label skin lesions.

34.

[MG2021]Marchiori Manerba Marta, Guidotti Riccardo (2021) - Third Conference on Cognitive Machine Intelligence (COGMI) 2021. In 2021 IEEE Third International Conference on Cognitive Machine Intelligence (CogMI)

Abstract

At every stage of a supervised learning process, harmful biases can arise and be inadvertently introduced, ultimately leading to marginalization, discrimination, and abuse towards minorities. This phenomenon becomes particularly impactful in the sensitive real-world context of abusive language detection systems, where non-discrimination is difficult to assess. In addition, given the opaqueness of their internal behavior, the dynamics leading a model to a certain decision are often neither clear nor accountable, and significant problems of trust could emerge. A robust value-oriented evaluation of models' fairness is therefore necessary. In this paper, we present FairShades, a model-agnostic approach for auditing the outcomes of abusive language detection systems. Combining explainability and fairness evaluation, FairShades can identify unintended biases and sensitive categories towards which models are most discriminative. This objective is pursued through the auditing of meaningful counterfactuals generated within the CheckList framework. We conduct several experiments on BERT-based models to demonstrate our proposal's novelty and effectiveness for unmasking biases.

35.

[GMP2021]Guidotti Riccardo, Monreale Anna, Pedreschi Dino, Giannotti Fosca (2021) - Explainable AI Within the Digital Transformation and Cyber Physical Systems (pp. 9-31)

Abstract

This book presents Explainable Artificial Intelligence (XAI), which aims at producing explainable models that enable human users to understand and appropriately trust the obtained results. The authors discuss the challenges involved in making machine learning-based AI explainable. Firstly, that the explanations must be adapted to different stakeholders (end-users, policy makers, industries, utilities etc.) with different levels of technical knowledge (managers, engineers, technicians, etc.) in different application domains. Secondly, that it is important to develop an evaluation framework and standards in order to measure the effectiveness of the provided explanations at the human and the technical levels. This book gathers research contributions aiming at the development and/or the use of XAI techniques in order to address the aforementioned challenges in different applications such as healthcare, finance, cybersecurity, and document summarization. It allows highlighting the benefits and requirements of using explainable models in different application domains in order to provide guidance to readers to select the most adapted models to their specified problem and conditions. Includes recent developments of the use of Explainable Artificial Intelligence (XAI) in order to address the challenges of digital transition and cyber-physical systems; Provides a textual scientific description of the use of XAI in order to address the challenges of digital transition and cyber-physical systems; Presents examples and case studies in order to increase transparency and understanding of the methodological concepts.

36.

[GD2021]Guidotti Riccardo, D’Onofrio Matteo (2021) - Frontiers in Artificial Intelligence

Abstract

Time series classification (TSC) is a pervasive and transversal problem in various fields ranging from disease diagnosis to anomaly detection in finance. Unfortunately, the most effective models used by Artificial Intelligence (AI) systems for TSC are not interpretable and hide the logic of the decision process, making them unusable in sensitive domains. Recent research is focusing on explanation methods to pair with the obscure classifier to compensate for this weakness. However, a TSC approach that is transparent by design and is simultaneously efficient and effective is even more preferable. To this aim, we propose an interpretable TSC method based on the patterns that can be extracted from the Matrix Profile (MP) of the time series in the training set. A smart design of the classification procedure allows obtaining an efficient and effective transparent classifier modeled as a decision tree that expresses the reasons for the classification as the presence of discriminative subsequences. Quantitative and qualitative experimentation shows that the proposed method outperforms the state-of-the-art interpretable approaches.

38.

[GM2021]Guidotti Riccardo, Monreale Anna (2021) - Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society

Abstract

Time series shapelets are discriminatory subsequences which are representative of a class, and their similarity to a time series can be used for successfully tackling the time series classification problem. The literature shows that Artificial Intelligence (AI) systems adopting classification models based on time series shapelets can be interpretable, more accurate, and significantly fast. Thus, in order to design a data-agnostic and interpretable classification approach, in this paper we first extend the notion of shapelets to different types of data, i.e., images, tabular and textual data. Then, based on this extended notion of shapelets we propose an interpretable data-agnostic classification method. Since the shapelets discovery can be time consuming, especially for data types more complex than time series, we exploit a notion of prototypes for finding candidate shapelets, and reducing both the time required to find a solution and the variance of shapelets. A wide experimentation on datasets of different types shows that the data-agnostic prototype-based shapelets returned by the proposed method empower an interpretable classification which is also fast, accurate, and stable. In addition, we show and we prove that shapelets can be at the basis of explainable AI methods.

39.

[MGY2021]Metta Carlo, Guidotti Riccardo, Yin Yuan, Gallinari Patrick, Rinzivillo Salvatore (2021) - 2021 IEEE Symposium on Computers and Communications (ISCC). In 2021 IEEE Symposium on Computers and Communications (ISCC)

Abstract

Explainable AI consists in developing mechanisms allowing for an interaction between decision systems and humans by making the decisions of the former understandable. This is particularly important in sensitive contexts like the medical domain. We propose a use case study, for skin lesion diagnosis, illustrating how it is possible to provide the practitioner with explanations on the decisions of a state-of-the-art deep neural network classifier trained to characterize skin lesions from examples. Our framework consists of a trained classifier onto which an explanation module operates. The latter is able to offer the practitioner exemplars and counterexemplars for the classification diagnosis, thus allowing the physician to interact with the automatic diagnosis system. The exemplars are generated via an adversarial autoencoder. We illustrate the behavior of the system on representative examples.

40.

[GR2021]Guidotti Riccardo, Ruggieri Salvatore (2021)

Abstract

In eXplainable Artificial Intelligence (XAI), several counterfactual explainers have been proposed, each focusing on some desirable properties of counterfactual instances: minimality, actionability, stability, diversity, plausibility, discriminative power. We propose an ensemble of counterfactual explainers that boosts weak explainers, which provide only a subset of such properties, to a powerful method covering all of them. The ensemble runs weak explainers on a sample of instances and of features, and it combines their results by exploiting a diversity-driven selection function. The method is model-agnostic and, through a wrapping approach based on autoencoders, it is also data-agnostic.

41.

[MBG2021]Metta Carlo, Beretta Andrea, Guidotti Riccardo, Yin Yuan, Gallinari Patrick, Rinzivillo Salvatore, Giannotti Fosca (2021) - arXiv preprint. In International Journal of Data Science and Analytics

Abstract

A key issue in critical contexts such as medical diagnosis is the interpretability of the deep learning models adopted in decision-making systems. Research in eXplainable Artificial Intelligence (XAI) is trying to solve this issue. However, XAI approaches are often only tested on generalist classifiers and do not represent realistic problems such as those of medical diagnosis. In this paper, we analyze a case study on skin lesion images where we customize an existing XAI approach for explaining a deep learning model able to recognize different types of skin lesions. The explanation is formed by synthetic exemplar and counter-exemplar images of skin lesions and offers the practitioner a way to highlight the crucial traits responsible for the classification decision. A survey conducted with domain experts, beginners and unskilled people shows that the usage of explanations increases the trust and confidence in the automatic decision system. Also, an analysis of the latent space adopted by the explainer unveils that some of the most frequent skin lesion classes are distinctly separated. This phenomenon could derive from the intrinsic characteristics of each class and, hopefully, can provide support in the resolution of the most frequent misclassifications by human experts.

42.

[BGM2021]Bonsignori Valerio, Guidotti Riccardo, Monreale Anna (2021) - Discovery Science

Abstract

Decision tree classifiers have been proved to be among the most interpretable models due to their intuitive structure that illustrates decision processes in the form of logical rules. Unfortunately, more complex tree-based classifiers such as oblique trees and random forests surpass the accuracy of decision trees at the cost of becoming non-interpretable. In this paper, we propose a method that takes as input any tree-based classifier and returns a single decision tree able to approximate its behavior. Our proposal merges tree-based classifiers by an intensional and extensional approach and applies a post-hoc explanation strategy. Our experiments show that the retrieved single decision tree is at least as accurate as the original tree-based model, faithful, and more interpretable.

45.

[PPB2021]Panigutti Cecilia, Perotti Alan, Panisson André, Bajardi Paolo, Pedreschi Dino (2021) - Information Processing & Management. In Journal of Information Processing and Management

Abstract

Highlights: We present a pipeline to detect and explain potential fairness issues in Clinical DSS. We study and compare different multi-label classification disparity measures. We explore ICD9 bias in MIMIC-IV, an openly available ICU benchmark dataset.

48.

[SGM2019]Setzu Mattia, Guidotti Riccardo, Monreale Anna, Turini Franco (2021) - Machine Learning and Knowledge Discovery in Databases. In ECML PKDD 2019: Machine Learning and Knowledge Discovery in Databases

Abstract

Artificial Intelligence systems often adopt machine learning models encoding complex algorithms with potentially unknown behavior. As the application of these “black box” models grows, it is our responsibility to understand their inner workings and formulate them in human-understandable explanations. To this end, we propose a rule-based model-agnostic explanation method that follows a local-to-global schema: it generalizes a global explanation summarizing the decision logic of a black box starting from the local explanations of single predicted instances. We define a scoring system based on a rule relevance score to extract global explanations from a set of local explanations in the form of decision rules. Experiments on several datasets and black boxes show the stability and low complexity of the global explanations provided by the proposed solution in comparison with baselines and state-of-the-art global explainers.

51.

[PGG2019]Pedreschi Dino, Giannotti Fosca, Guidotti Riccardo, Monreale Anna, Ruggieri Salvatore, Turini Franco (2021) - Proceedings of the AAAI Conference on Artificial Intelligence. In Proceedings of the AAAI Conference on Artificial Intelligence

Abstract

Black box AI systems for automated decision making, often based on machine learning over (big) data, map a user’s features into a class or a score without exposing the reasons why. This is problematic not only for lack of transparency, but also for possible biases inherited by the algorithms from human prejudices and collection artifacts hidden in the training data, which may lead to unfair or wrong decisions. We focus on the urgent open challenge of how to construct meaningful explanations of opaque AI/ML systems, introducing the local-to-global framework for black box explanation, articulated along three lines: (i) the language for expressing explanations in terms of logic rules, with statistical and causal interpretation; (ii) the inference of local explanations for revealing the decision rationale for a specific case, by auditing the black box in the vicinity of the target instance; (iii) the bottom-up generalization of many local explanations into simple global ones, with algorithms that optimize for quality and comprehensibility. We argue that the local-first approach opens the door to a wide variety of alternative solutions along different dimensions: a variety of data sources (relational, text, images, etc.), a variety of learning problems (multi-label classification, regression, scoring, ranking), a variety of languages for expressing meaningful explanations, a variety of means to audit a black box.

52.

[G2021]Guidotti Riccardo (2021) - Artificial Intelligence. In Artificial Intelligence, 103428

Abstract

Evaluating local explanation methods is a difficult task due to the lack of a shared and universally accepted definition of explanation. In the literature, one of the most common ways to assess the performance of an explanation method is to measure the fidelity of the explanation with respect to the classification of a black box model adopted by an Artificial Intelligence system for making a decision. However, this kind of evaluation only measures the degree of adherence of the local explainer in reproducing the behavior of the black box classifier with respect to the final decision. Therefore, the explanation provided by the local explainer could be different in content even though it leads to the same decision as the AI system. In this paper, we propose an approach that allows measuring to what extent the explanations returned by local explanation methods are correct with respect to a synthetic ground truth explanation. Indeed, the proposed methodology enables the generation of synthetic transparent classifiers for which the reason for the decision taken, i.e., a synthetic ground truth explanation, is available by design. Experimental results show how the proposed approach allows local explanations to be easily evaluated against the ground truth and the quality of local explanation methods to be characterized.

53.

[LGR2020]Lampridis Orestis, Guidotti Riccardo, Ruggieri Salvatore (2021) - Discovery Science. In International Conference on Discovery Science (pp. 357-373). Springer, Cham.

Abstract

We present xspells, a model-agnostic local approach for explaining the decisions of a black box model for sentiment classification of short texts. The explanations provided consist of a set of exemplar sentences and a set of counter-exemplar sentences. The former are examples classified by the black box with the same label as the text to explain. The latter are examples classified with a different label (a form of counter-factuals). Both are close in meaning to the text to explain, and both are meaningful sentences, although they are synthetically generated. xspells generates neighbors of the text to explain in a latent space using Variational Autoencoders for encoding text and decoding latent instances. A decision tree is learned from randomly generated neighbors, and used to drive the selection of the exemplars and counter-exemplars. We report experiments on two datasets showing that xspells outperforms the well-known LIME method in terms of quality of explanations, fidelity, and usefulness, and that it is comparable to it in terms of stability.

54.

[PGM2019]Panigutti Cecilia, Guidotti Riccardo, Monreale Anna, Pedreschi Dino (2021) - Precision Health and Medicine. In International Workshop on Health Intelligence (pp. 97-110). Springer, Cham.

Abstract

Today the state-of-the-art performance in classification is achieved by the so-called “black boxes”, i.e. decision-making systems whose internal logic is obscure. Such models could revolutionize the health-care system, however their deployment in real-world diagnosis decision support systems is subject to several risks and limitations due to the lack of transparency. The typical classification problem in health-care requires a multi-label approach since the possible labels are not mutually exclusive, e.g. diagnoses. We propose MARLENA, a model-agnostic method which explains multi-label black box decisions. MARLENA explains an individual decision in three steps. First, it generates a synthetic neighborhood around the instance to be explained using a strategy suitable for multi-label decisions. It then learns a decision tree on such neighborhood and finally derives from it a decision rule that explains the black box decision. Our experiments show that MARLENA performs well in terms of mimicking the black box behavior while gaining at the same time a notable amount of interpretability through compact decision rules, i.e. rules with limited length.

55.

[GMC2019]Guidotti Riccardo, Monreale Anna, Cariaggi Leonardo (2021) - Advances in Knowledge Discovery and Data Mining. In Pacific-Asia Conference on Knowledge Discovery and Data Mining (pp. 55-68). Springer, Cham.

Abstract

Given the wide use of machine learning approaches based on opaque prediction models, understanding the reasons behind decisions of black box decision systems is nowadays a crucial topic. We address the problem of providing meaningful explanations in the widely-applied image classification tasks. In particular, we explore the impact of changing the neighborhood generation function for a local interpretable model-agnostic explanator by proposing four different variants. All the proposed methods are based on a grid-based segmentation of the images, but each of them proposes a different strategy for generating the neighborhood of the image for which an explanation is required. A deep experimentation shows both the improvements and the weaknesses of each proposed approach.

56.

[GMS2020]Guidotti Riccardo, Monreale Anna, Spinnato Francesco, Pedreschi Dino, Giannotti Fosca (2020) - 2020 IEEE Second International Conference on Cognitive Machine Intelligence (CogMI)

Abstract

We present a method to explain the decisions of black box models for time series classification. The explanation consists of factual and counterfactual shapelet-based rules revealing the reasons for the classification, and of a set of exemplars and counter-exemplars highlighting similarities and differences with the time series under analysis. The proposed method first generates exemplar and counter-exemplar time series in the latent feature space and learns a local latent decision tree classifier. Then, it selects and decodes those respecting the decision rules explaining the decision. Finally, it learns on them a shapelet-tree that reveals the parts of the time series that must, and must not, be contained for getting the returned outcome from the black box. A wide experimentation shows that the proposed method provides faithful, meaningful and interpretable explanations.

57.

[PPP2020]Panigutti Cecilia, Perotti Alan, Pedreschi Dino (2020) - FAT* '20: Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency. In FAT* '20: Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency

Abstract

Several recent advancements in Machine Learning involve black-box models: algorithms that do not provide human-understandable explanations in support of their decisions. This limitation hampers the fairness, accountability and transparency of these models; the field of eXplainable Artificial Intelligence (XAI) tries to solve this problem by providing human-understandable explanations for black-box models. However, healthcare datasets (and the related learning tasks) often present peculiar features, such as sequential data, multi-label predictions, and links to structured background knowledge. In this paper, we introduce Doctor XAI, a model-agnostic explainability technique able to deal with multi-labeled, sequential, ontology-linked data. We focus on explaining Doctor AI, a multilabel classifier which takes as input the clinical history of a patient in order to predict the next visit. Furthermore, we show how exploiting the temporal dimension in the data and the domain knowledge encoded in the medical ontology improves the quality of the mined explanations.

58.

[RGG2020]Ruggieri Salvatore, Giannotti Fosca, Guidotti Riccardo, Monreale Anna, Pedreschi Dino, Turini Franco (2020). In ANNUARIO DI DIRITTO COMPARATO E DI STUDI LEGISLATIVI

Abstract

The pervasive adoption of Artificial Intelligence (AI) models in the modern information society requires counterbalancing the growing decision power delegated to AI models with risk assessment methodologies. In this paper, we consider the risk of discriminatory decisions and review approaches for discovering discrimination and for designing fair AI models. We highlight the tight relations between discrimination discovery and explainable AI, with the latter being a more general approach for understanding the behavior of black boxes.

59.

[GM2020]Guidotti Riccardo, Monreale Anna (2020) - 2020 IEEE International Conference on Data Mining (ICDM). In 2020 IEEE International Conference on Data Mining (ICDM)

Abstract

Synthetic data generation has been widely adopted in software testing, data privacy, imbalanced learning, machine learning explanation, etc. In such contexts, it is important to generate data samples located within “local” areas surrounding specific instances. Local synthetic data can help the learning phase of predictive models, and it is fundamental for methods explaining the local behavior of obscure classifiers. The contribution of this paper is twofold. First, we introduce a method based on generative operators allowing the synthetic neighborhood generation by applying specific perturbations on a given input instance. The key factor consists in performing a data transformation that makes the method applicable to any type of data, i.e., data-agnostic. Second, we design a framework for evaluating the goodness of local synthetic neighborhoods exploiting both supervised and unsupervised methodologies. A deep experimentation shows the effectiveness of the proposed method.

60.

[BPP2020]Bodria Francesco, Panisson André, Perotti Alan, Piaggesi Simone (2020) - Discussion Paper

Research Line 1

63.

[GMP2019]Guidotti Riccardo, Monreale Anna, Pedreschi Dino (2019) - ERCIM News, 116, 12-13. In ERCIM News, 116, 12-13

Research Line 1▪2▪3

64.

[PGG2018]Pedreschi Dino, Giannotti Fosca, Guidotti Riccardo, Monreale Anna, Pappalardo Luca, Ruggieri Salvatore, Turini Franco (2018) - arXiv preprint

Abstract

Black box systems for automated decision making, often based on machine learning over (big) data, map a user's features into a class or a score without exposing the reasons why. This is problematic not only for lack of transparency, but also for possible biases hidden in the algorithms, due to human prejudices and collection artifacts hidden in the training data, which may lead to unfair or wrong decisions. We introduce the local-to-global framework for black box explanation, a novel approach with promising early results, which paves the road for a wide spectrum of future developments along three dimensions: (i) the language for expressing explanations in terms of highly expressive logic-based rules, with a statistical and causal interpretation; (ii) the inference of local explanations aimed at revealing the logic of the decision adopted for a specific instance by querying and auditing the black box in the vicinity of the target instance; (iii) the bottom-up generalization of the many local explanations into simple global ones, with algorithms that optimize the quality and comprehensibility of explanations.

Researchers working on this line

Riccardo Guidotti, University of Pisa, R. line 1 ▪ 3 ▪ 4 ▪ 5

Franco Turini, University of Pisa, R. line 1 ▪ 2 ▪ 5

Salvatore Ruggieri, University of Pisa, R. line 1 ▪ 2

Mirco Nanni, ISTI - CNR Pisa, R. line 1 ▪ 4

Salvo Rinzivillo, ISTI - CNR Pisa, R. line 1 ▪ 3 ▪ 4 ▪ 5

Andrea Beretta, ISTI - CNR Pisa, R. line 1 ▪ 4 ▪ 5

Anna Monreale, University of Pisa, R. line 1 ▪ 4 ▪ 5

Cecilia Panigutti, Scuola Normale, R. line 1 ▪ 4 ▪ 5

Mattia Setzu, University of Pisa, R. line 1 ▪ 2

Francesco Spinnato, Scuola Normale, R. line 1 ▪ 4

Francesca Naretto, Scuola Normale, R. line 1 ▪ 3 ▪ 4 ▪ 5

Francesco Bodria, Scuola Normale, R. line 1 ▪ 3

Carlo Metta, ISTI - CNR Pisa, R. line 1 ▪ 2 ▪ 3 ▪ 4

Alessio Malizia, University of Pisa, R. line 1 ▪ 3 ▪ 4

Marta Marchiori Manerba, University of Pisa, R. line 1 ▪ 2 ▪ 5

Martina Cinquini, University of Pisa, R. line 1 ▪ 2

Cristiano Landi, University of Pisa, R. line 1

Samuele Tonati, University of Pisa, R. line 4

Anna Arias Duart, University of Pisa, R. line 1

Andrea Fedele, University of Pisa, R. line 1

Federico Mazzoni, University of Pisa, R. line 1 ▪ 4

Clara Punzi, Scuola Normale, R. line 1 ▪ 5

Andrea Pugnana, Scuola Normale, R. line 2

Margherita Lalli, IMT Lucca, R. line 1

Marzio Di Vece, IMT Lucca, R. line 1