Fosca Giannotti

Role: Principal Investigator

Affiliation: Scuola Normale

Fosca Giannotti is Full Professor at Scuola Normale Superiore, Pisa, Italy. Fosca Giannotti is a pioneering scientist in mobility data mining, social network analysis and privacy-preserving data mining. Fosca leads the Pisa KDD Lab - Knowledge Discovery and Data Mining Laboratory, a joint research initiative of the University of Pisa and ISTI-CNR, founded in 1994 as one of the earliest research lab on data mining. Fosca's research focus is on social mining from big data: smart cities, human dynamics, social and economic networks, ethics and trust, diffusion of innovations. She is author of more than 300 papers. She has coordinated tens of European projects and industrial collaborations. Fosca is the former coordinator of SoBigData(link is external), the European research infrastructure on Big Data Analytics and Social Mining, an ecosystem of ten cutting edge European research centres providing an open platform for interdisciplinary data science and data-driven innovation. Recently she became the recipient of a prestigious ERC Advanced Grant entitled XAI – Science and technology for the explanation of AI decision making.

1.

[GMR2018]Guidotti Riccardo, Monreale Anna, Ruggieri Salvatore, Turini Franco, Giannotti Fosca, Pedreschi Dino (2022) - ACM Computing Surveys. In ACM computing surveys (CSUR), 51(5), 1-42.

Abstract

In recent years, many accurate decision support systems have been constructed as black boxes, that is as systems that hide their internal logic to the user. This lack of explanation constitutes both a practical and an ethical issue. The literature reports many approaches aimed at overcoming this crucial weakness, sometimes at the cost of sacrificing accuracy for interpretability. The applications in which black box decision systems can be used are various, and each approach is typically developed to provide a solution for a specific problem and, as a consequence, it explicitly or implicitly delineates its own definition of interpretability and explanation. The aim of this article is to provide a classification of the main problems addressed in the literature with respect to the notion of explanation and the type of black box system. Given a problem definition, a black box type, and a desired explanation, this survey should help the researcher to find the proposals more useful for his own work. The proposed classification of approaches to open black box models should also be useful for putting the many research open questions in perspective.

2.

[GMG2019]

Guidotti Riccardo, Monreale Anna, Giannotti Fosca, Pedreschi Dino, Ruggieri Salvatore, Turini Franco (2021) - IEEE Intelligent Systems. In IEEE Intelligent Systems

Abstract

The rise of sophisticated machine learning models has brought accurate but obscure decision systems, which hide their logic, thus undermining transparency, trust, and the adoption of artificial intelligence (AI) in socially sensitive and safety-critical contexts. We introduce a local rule-based explanation method, providing faithful explanations of the decision made by a black box classifier on a specific instance. The proposed method first learns an interpretable, local classifier on a synthetic neighborhood of the instance under investigation, generated by a genetic algorithm. Then, it derives from the interpretable classifier an explanation consisting of a decision rule, explaining the factual reasons of the decision, and a set of counterfactuals, suggesting the changes in the instance features that would lead to a different outcome. Experimental results show that the proposed method outperforms existing approaches in terms of the quality of the explanations and of the accuracy in mimicking the black box.

3.

[SGM2021]

Setzu Mattia, Guidotti Riccardo, Monreale Anna, Turini Franco, Pedreschi Dino, Giannotti Fosca (2021) - Artificial Intelligence. In Artificial Intelligence

Abstract

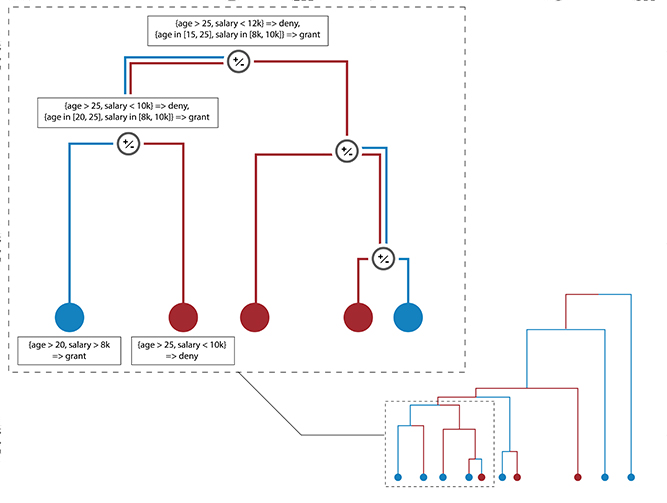

Artificial Intelligence (AI) has come to prominence as one of the major components of our society, with applications in most aspects of our lives. In this field, complex and highly nonlinear machine learning models such as ensemble models, deep neural networks, and Support Vector Machines have consistently shown remarkable accuracy in solving complex tasks. Although accurate, AI models often are “black boxes” which we are not able to understand. Relying on these models has a multifaceted impact and raises significant concerns about their transparency. Applications in sensitive and critical domains are a strong motivational factor in trying to understand the behavior of black boxes. We propose to address this issue by providing an interpretable layer on top of black box models by aggregating “local” explanations. We present GLocalX, a “local-first” model agnostic explanation method. Starting from local explanations expressed in form of local decision rules, GLocalX iteratively generalizes them into global explanations by hierarchically aggregating them. Our goal is to learn accurate yet simple interpretable models to emulate the given black box, and, if possible, replace it entirely. We validate GLocalX in a set of experiments in standard and constrained settings with limited or no access to either data or local explanations. Experiments show that GLocalX is able to accurately emulate several models with simple and small models, reaching state-of-the-art performance against natively global solutions. Our findings show how it is often possible to achieve a high level of both accuracy and comprehensibility of classification models, even in complex domains with high-dimensional data, without necessarily trading one property for the other. This is a key requirement for a trustworthy AI, necessary for adoption in high-stakes decision making applications.

4.

[GMR2018a]Guidotti Riccardo, Monreale Anna, Ruggieri Salvatore , Pedreschi Dino, Turini Franco , Giannotti Fosca (2018) - Arxive preprint

Abstract

The recent years have witnessed the rise of accurate but obscure decision systems which hide the logic of their internal decision processes to the users. The lack of explanations for the decisions of black box systems is a key ethical issue, and a limitation to the adoption of achine learning components in socially sensitive and safety-critical contexts. Therefore, we need explanations that reveals the reasons why a predictor takes a certain decision. In this paper we focus on the problem of black box outcome explanation, i.e., explaining the reasons of the decision taken on a specific instance. We propose LORE, an agnostic method able to provide interpretable and faithful explanations. LORE first leans a local interpretable predictor on a synthetic neighborhood generated by a genetic algorithm. Then it derives from the logic of the local interpretable predictor a meaningful explanation consisting of: a decision rule, which explains the reasons of the decision; and a set of counterfactual rules, suggesting the changes in the instance's features that lead to a different outcome. Wide experiments show that LORE outperforms existing methods and baselines both in the quality of explanations and in the accuracy in mimicking the black box.

5.

[CGG2023]Martina Cinquini, Fosca Giannotti, Riccardo Guidotti, Andrea Mattei (2023) - Explainable Artificial Intelligence. First World Conference, xAI 2023

Abstract

Missing data are quite common in real scenarios when using Artificial Intelligence (AI) systems for decision-making with tabular data and effectively handling them poses a significant challenge for such systems. While some machine learning models used by AI systems can tackle this problem, the existing literature lacks post-hoc explainability approaches able to deal with predictors that encounter missing data. In this paper, we extend a widely used local model-agnostic post-hoc explanation approach that enables explainability in the presence of missing values by incorporating state-of-the-art imputation methods within the explanation process. Since our proposal returns explanations in the form of feature importance, the user will be aware also of the importance of a missing value in a given record for a particular prediction. Extensive experiments show the effectiveness of the proposed method with respect to some baseline solutions relying on traditional data imputation.

6.

[SGM2023]Francesco Spinnato, Riccardo Guidotti, Anna Monreale, Mirco Nanni, Dino Pedreschi, Fosca Giannotti (2023) - ACM Transactions on Knowledge Discovery from Data

Abstract

The growing availability of time series data has increased the usage of classifiers for this data type. Unfortunately, state-of-the-art time series classifiers are black-box models and, therefore, not usable in critical domains such as healthcare or finance, where explainability can be a crucial requirement. This paper presents a framework to explain the predictions of any black-box classifier for univariate and multivariate time series. The provided explanation is composed of three parts. First, a saliency map highlighting the most important parts of the time series for the classification. Second, an instance-based explanation exemplifies the black-box’s decision by providing a set of prototypical and counterfactual time series. Third, a factual and counterfactual rule-based explanation, revealing the reasons for the classification through logical conditions based on subsequences that must, or must not, be contained in the time series. Experiments and benchmarks show that the proposed method provides faithful, meaningful, stable, and interpretable explanations.

8.

[BGG2023]Francesco Bodria, Fosca Giannotti, Riccardo Guidotti, Francesca Naretto, Dino Pedreschi, Salvatore Rinzivillo (2023) - Springer Science+Business Media, LLC, part of Springer Nature. In Data Mining and Knowledge Discovery

Abstract

The rise of sophisticated black-box machine learning models in Artificial Intelligence systems has prompted the need for explanation methods that reveal how these models work in an understandable way to users and decision makers. Unsurprisingly, the state-of-the-art exhibits currently a plethora of explainers providing many different types of explanations. With the aim of providing a compass for researchers and practitioners, this paper proposes a categorization of explanation methods from the perspective of the type of explanation they return, also considering the different input data formats. The paper accounts for the most representative explainers to date, also discussing similarities and discrepancies of returned explanations through their visual appearance. A companion website to the paper is provided as a continuous update to new explainers as they appear. Moreover, a subset of the most robust and widely adopted explainers, are benchmarked with respect to a repertoire of quantitative metrics.

9.

[MBG2023]Carlo Metta, Andrea Beretta, Riccardo Guidotti, Yuan Yin, Patrick Gallinari, Salvatore Rinzivillo, Fosca Giannotti (2023) - Springer Nature. In International Journal of Data Science and Analytics

Abstract

A key issue in critical contexts such as medical diagnosis is the interpretability of the deep learning models adopted in decision-making systems. Research in eXplainable Artificial Intelligence (XAI) is trying to solve this issue. However, often XAI approaches are only tested on generalist classifier and do not represent realistic problems such as those of medical diagnosis. In this paper, we aim at improving the trust and confidence of users towards automatic AI decision systems in the field of medical skin lesion diagnosis by customizing an existing XAI approach for explaining an AI model able to recognize different types of skin lesions. The explanation is generated through the use of synthetic exemplar and counter-exemplar images of skin lesions and our contribution offers the practitioner a way to highlight the crucial traits responsible for the classification decision. A validation survey with domain experts, beginners, and unskilled people shows that the use of explanations improves trust and confidence in the automatic decision system. Also, an analysis of the latent space adopted by the explainer unveils that some of the most frequent skin lesion classes are distinctly separated. This phenomenon may stem from the intrinsic characteristics of each class and may help resolve common misclassifications made by human experts.

15.

[BGG2023c]Francesco Bodria, Riccardo Guidotti, Fosca Giannotti & Dino Pedreschi (2022) - Proceedings of the 25th international conference on Discovery Science (DS), 2022, Montpellier. In Lecture Notes in Computer Science()

Abstract

Many dimensionality reduction methods have been introduced to map a data space into one with fewer features and enhance machine learning models’ capabilities. This reduced space, called latent space, holds properties that allow researchers to understand the data better and produce better models. This work proposes an interpretable latent space that preserves the similarity of data points and supports a new way of learning a classification model that allows prediction and explanation through counterfactual examples. We demonstrate with extensive experiments the effectiveness of the latent space with respect to different metrics in comparison with several competitors, as well as the quality of the achieved counterfactual explanations.

16.

[BGG2023b]Bodria Francesco, Riccardo Guidotti, Fosca Giannotti, Dino Pedreschi (2022) - Proceedings of Data Science and Advanced Analytics (DSAA), 2022 IEEE 9th International Conference. In Proceedings of the 9th IEEE International Conference on Data Science and Advanced, Analytics (DSAA)

Abstract

Artificial Intelligence decision-making systems have dramatically increased their predictive performance in recent years, beating humans in many different specific tasks. However, with increased performance has come an increase in the complexity of the black-box models adopted by the AI systems, making them entirely obscure for the decision process adopted. Explainable AI is a field that seeks to make AI decisions more transparent by producing explanations. In this paper, we propose T-LACE, an approach able to retrieve post-hoc counterfactual explanations for a given pre-trained black-box model. T-LACE exploits the similarity and linearity proprieties of a custom-created transparent latent space to build reliable counterfactual explanations. We tested T-LACE on several tabular datasets and provided qualitative evaluations of the generated explanations in terms of similarity, robustness, and diversity. Comparative analysis against various state-of-the-art counterfactual explanation methods shows the higher effectiveness of our approach.

17.

[NMG2022]Naretto Francesca, Monreale Anna, Giannotti Fosca (2022) - 2022 IEEE 4th International Conference on Cognitive Machine Intelligence (CogMI). In IEEE International Conference on Cognitive Machine Intelligence (CogMI)

Abstract

In recent years we are witnessing the diffusion of AI systems based on powerful Machine Learning models which find application in many critical contexts such as medicine, financial market and credit scoring. In such a context it is particularly important to design Trustworthy AI systems while guaranteeing transparency, with respect to their decision reasoning and privacy protection. Although many works in the literature addressed the lack of transparency and the risk of privacy exposure of Machine Learning models, the privacy risks of explainers have not been appropriately studied. This paper presents a methodology for evaluating the privacy exposure raised by interpretable global explainers able to imitate the original black-box classifier. Our methodology exploits the well-known Membership Inference Attack. The experimental results highlight that global explainers based on interpretable trees lead to an increase in privacy exposure.

20.

[NMG2022]Francesca Naretto, Anna Monreale, Fosca Giannotti (2022) - Proceedings of the First International Conference on Hybrid Human-Artificial Intelligence. In Frontiers in Artificial Intelligence and Applications

Abstract

nan

Research Line 5

26.

[BRF2022]

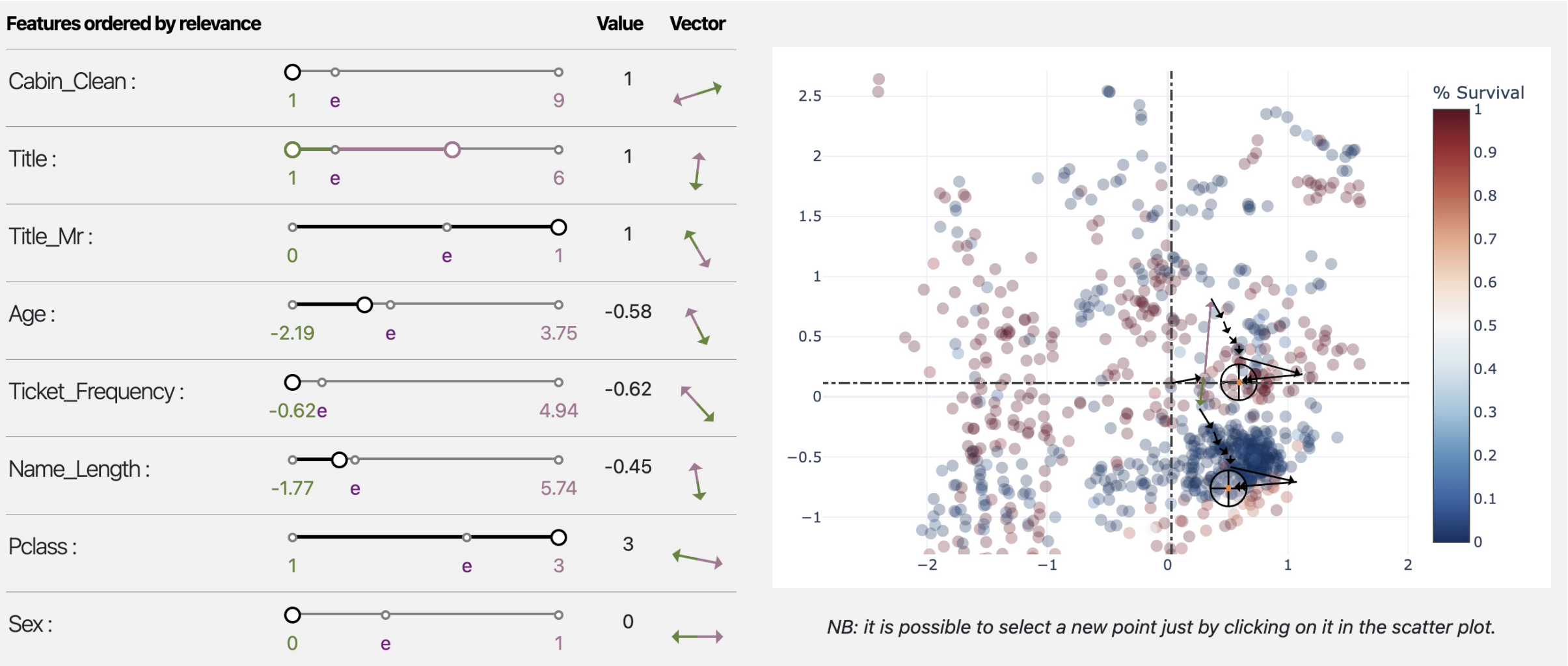

Bodria Francesco, Rinzivillo Salvatore, Fadda Daniele, Guidotti Riccardo, Fosca Giannotti, Pedreschi Dino (2022) - EUROVIS 2022. In Proceedings of the 2022 Conference Eurovis 2022

Abstract

Autoencoders are a powerful yet opaque feature reduction technique, on top of which we propose a novel way for the joint visual exploration of both latent and real space. By interactively exploiting the mapping between latent and real features, it is possible to unveil the meaning of latent features while providing deeper insight into the original variables. To achieve this goal, we exploit and re-adapt existing approaches from eXplainable Artificial Intelligence (XAI) to understand the relationships between the input and latent features. The uncovered relationships between input features and latent ones allow the user to understand the data structure concerning external variables such as the predictions of a classification model. We developed an interactive framework that visually explores the latent space and allows the user to understand the relationships of the input features with model prediction.

27.

[PBF2022]Panigutti Cecilia, Beretta Andrea, Fadda Daniele , Giannotti Fosca, Pedreschi Dino, Perotti Alan, Rinzivillo Salvatore (2022). In ACM Transactions on Interactive Intelligent Systems

Abstract

eXplainable AI (XAI) involves two intertwined but separate challenges: the development of techniques to extract explanations from black-box AI models, and the way such explanations are presented to users, i.e., the explanation user interface. Despite its importance, the second aspect has received limited attention so far in the literature. Effective AI explanation interfaces are fundamental for allowing human decision-makers to take advantage and oversee high-risk AI systems effectively. Following an iterative design approach, we present the first cycle of prototyping-testing-redesigning of an explainable AI technique, and its explanation user interface for clinical Decision Support Systems (DSS). We first present an XAI technique that meets the technical requirements of the healthcare domain: sequential, ontology-linked patient data, and multi-label classification tasks. We demonstrate its applicability to explain a clinical DSS, and we design a first prototype of an explanation user interface. Next, we test such a prototype with healthcare providers and collect their feedback, with a two-fold outcome: first, we obtain evidence that explanations increase users' trust in the XAI system, and second, we obtain useful insights on the perceived deficiencies of their interaction with the system, so that we can re-design a better, more human-centered explanation interface.

Research Line 1▪3▪4

28.

[PBP2022]Panigutti Cecilia, Beretta Andrea, Pedreschi Dino, Giannotti Fosca (2022) - 2022 Conference on Human Factors in Computing Systems. In Proceedings of the 2022 Conference on Human Factors in Computing Systems

Abstract

The field of eXplainable Artificial Intelligence (XAI) focuses on providing explanations for AI systems' decisions. XAI applications to AI-based Clinical Decision Support Systems (DSS) should increase trust in the DSS by allowing clinicians to investigate the reasons behind its suggestions. In this paper, we present the results of a user study on the impact of advice from a clinical DSS on healthcare providers' judgment in two different cases: the case where the clinical DSS explains its suggestion and the case it does not. We examined the weight of advice, the behavioral intention to use the system, and the perceptions with quantitative and qualitative measures. Our results indicate a more significant impact of advice when an explanation for the DSS decision is provided. Additionally, through the open-ended questions, we provide some insights on how to improve the explanations in the diagnosis forecasts for healthcare assistants, nurses, and doctors.

Research Line 4

30.

[VMG2022]

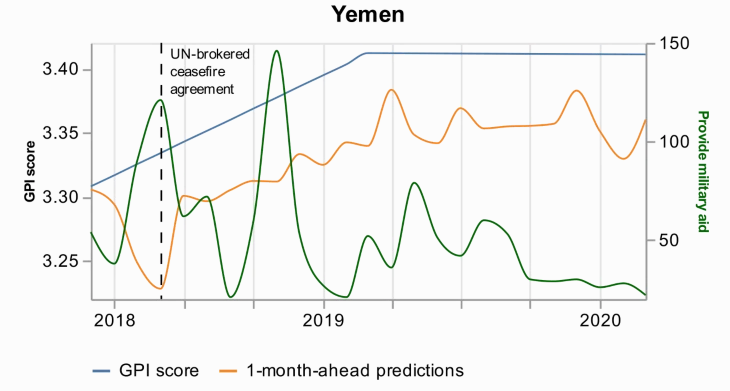

Voukelatou Vasiliki, Miliou Ioanna, Giannotti Fosca, Pappalardo Luca (2022) - EPJ Data Science. In EPJ Data Science

Abstract

Peace is a principal dimension of well-being and is the way out of inequity and violence. Thus, its measurement has drawn the attention of researchers, policymakers, and peacekeepers. During the last years, novel digital data streams have drastically changed the research in this field. The current study exploits information extracted from a new digital database called Global Data on Events, Location, and Tone (GDELT) to capture peace through the Global Peace Index (GPI). Applying predictive machine learning models, we demonstrate that news media attention from GDELT can be used as a proxy for measuring GPI at a monthly level. Additionally, we use explainable AI techniques to obtain the most important variables that drive the predictions. This analysis highlights each country’s profile and provides explanations for the predictions, and particularly for the errors and the events that drive these errors. We believe that digital data exploited by researchers, policymakers, and peacekeepers, with data science tools as powerful as machine learning, could contribute to maximizing the societal benefits and minimizing the risks to peace.

31.

[CDF2021]Chatila Raja, Dignum Virginia, Fisher Michael, Giannotti Fosca, Morik Katharina, Russell Stuart, Yeung Karen (2022) - Reflections on Artificial Intelligence for Humanity. In Lecture Notes in Computer Science,

Abstract

Modern AI systems have become of widespread use in almost all sectors with a strong impact on our society. However, the very methods on which they rely, based on Machine Learning techniques for processing data to predict outcomes and to make decisions, are opaque, prone to bias and may produce wrong answers. Objective functions optimized in learning systems are not guaranteed to align with the values that motivated their definition. Properties such as transparency, verifiability, explainability, security, technical robustness and safety, are key to build operational governance frameworks, so that to make AI systems justifiably trustworthy and to align their development and use with human rights and values.

35.

[GMP2021]

Guidotti Riccardo, Monreale Anna, Pedreschi Dino, Giannotti Fosca (2021) - Explainable AI Within the Digital Transformation and Cyber Physical Systems (pp. 9-31)

Abstract

This book presents Explainable Artificial Intelligence (XAI), which aims at producing explainable models that enable human users to understand and appropriately trust the obtained results. The authors discuss the challenges involved in making machine learning-based AI explainable. Firstly, that the explanations must be adapted to different stakeholders (end-users, policy makers, industries, utilities etc.) with different levels of technical knowledge (managers, engineers, technicians, etc.) in different application domains. Secondly, that it is important to develop an evaluation framework and standards in order to measure the effectiveness of the provided explanations at the human and the technical levels. This book gathers research contributions aiming at the development and/or the use of XAI techniques in order to address the aforementioned challenges in different applications such as healthcare, finance, cybersecurity, and document summarization. It allows highlighting the benefits and requirements of using explainable models in different application domains in order to provide guidance to readers to select the most adapted models to their specified problem and conditions. Includes recent developments of the use of Explainable Artificial Intelligence (XAI) in order to address the challenges of digital transition and cyber-physical systems; Provides a textual scientific description of the use of XAI in order to address the challenges of digital transition and cyber-physical systems; Presents examples and case studies in order to increase transparency and understanding of the methodological concepts.

41.

[MBG2021]Metta Carlo, Beretta Andrea, Guidotti Riccardo, Yin Yuan, Gallinari Patrick, Rinzivillo Salvatore, Giannotti Fosca (2021) - Arxive preprint. In International Journal of Data Science and Analytics

Abstract

A key issue in critical contexts such as medical diagnosis is the interpretability of the deep learning models adopted in decision-making systems. Research in eXplainable Artificial Intelligence (XAI) is trying to solve this issue. However, often XAI approaches are only tested on generalist classifier and do not represent realistic problems such as those of medical diagnosis. In this paper, we analyze a case study on skin lesion images where we customize an existing XAI approach for explaining a deep learning model able to recognize different types of skin lesions. The explanation is formed by synthetic exemplar and counter-exemplar images of skin lesion and offers the practitioner a way to highlight the crucial traits responsible for the classification decision. A survey conducted with domain experts, beginners and unskilled people proof that the usage of explanations increases the trust and confidence in the automatic decision system. Also, an analysis of the latent space adopted by the explainer unveils that some of the most frequent skin lesion classes are distinctly separated. This phenomenon could derive from the intrinsic characteristics of each class and, hopefully, can provide support in the resolution of the most frequent misclassifications by human experts.

46.

[NPN2020]Naretto Francesca, Pellungrini Roberto, Nardini Franco Maria, Giannotti Fosca (2021) - ECML PKDD 2020 Workshops. In ECML PKDD 2020 Workshops

Abstract

The analysis of privacy risk for mobility data is a fundamental part of any privacy-aware process based on such data. Mobility data are highly sensitive. Therefore, the correct identification of the privacy risk before releasing the data to the public is of utmost importance. However, existing privacy risk assessment frameworks have high computational complexity. To tackle these issues, some recent work proposed a solution based on classification approaches to predict privacy risk using mobility features extracted from the data. In this paper, we propose an improvement of this approach by applying long short-term memory (LSTM) neural networks to predict the privacy risk directly from original mobility data. We empirically evaluate privacy risk on real data by applying our LSTM-based approach. Results show that our proposed method based on a LSTM network is effective in predicting the privacy risk with results in terms of F1 of up to 0.91. Moreover, to explain the predictions of our model, we employ a state-of-the-art explanation algorithm, Shap. We explore the resulting explanation, showing how it is possible to provide effective predictions while explaining them to the end-user.

51.

[PGG2019]Pedreschi Dino, Giannotti Fosca, Guidotti Riccardo, Monreale Anna, Ruggieri Salvatore, Turini Franco (2021) - Proceedings of the AAAI Conference on Artificial Intelligence. In Proceedings of the AAAI Conference on Artificial Intelligence

Abstract

Black box AI systems for automated decision making, often based on machine learning over (big) data, map a user’s features into a class or a score without exposing the reasons why. This is problematic not only for lack of transparency, but also for possible biases inherited by the algorithms from human prejudices and collection artifacts hidden in the training data, which may lead to unfair or wrong decisions. We focus on the urgent open challenge of how to construct meaningful explanations of opaque AI/ML systems, introducing the local-toglobal framework for black box explanation, articulated along three lines: (i) the language for expressing explanations in terms of logic rules, with statistical and causal interpretation; (ii) the inference of local explanations for revealing the decision rationale for a specific case, by auditing the black box in the vicinity of the target instance; (iii), the bottom-up generalization of many local explanations into simple global ones, with algorithms that optimize for quality and comprehensibility. We argue that the local-first approach opens the door to a wide variety of alternative solutions along different dimensions: a variety of data sources (relational, text, images, etc.), a variety of learning problems (multi-label classification, regression, scoring, ranking), a variety of languages for expressing meaningful explanations, a variety of means to audit a black box.

56.

[GMS2020]

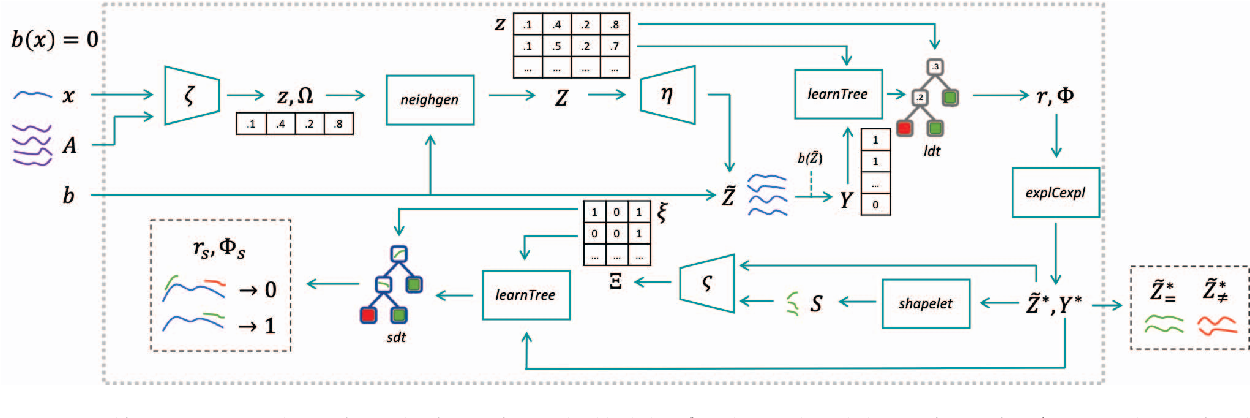

Guidotti Riccardo, Monreale Anna, Spinnato Francesco, Pedreschi Dino, Giannotti Fosca (2020) - 2020 IEEE Second International Conference on Cognitive Machine Intelligence (CogMI)

Abstract

We present a method to explain the decisions of black box models for time series classification. The explanation consists of factual and counterfactual shapelet-based rules revealing the reasons for the classification, and of a set of exemplars and counter-exemplars highlighting similarities and differences with the time series under analysis. The proposed method first generates exemplar and counter-exemplar time series in the latent feature space and learns a local latent decision tree classifier. Then, it selects and decodes those respecting the decision rules explaining the decision. Finally, it learns on them a shapelet-tree that reveals the parts of the time series that must, and must not, be contained for getting the returned outcome from the black box. A wide experimentation shows that the proposed method provides faithful, meaningful and interpretable explanations.

58.

[RGG2020]Ruggieri Salvatore, Giannotti Fosca, Guidotti Riccardo, Monreale Anna, Pedreschi Dino, Turini Franco (2020). In ANNUARIO DI DIRITTO COMPARATO E DI STUDI LEGISLATIVI

Abstract

The pervasive adoption of Artificial Intelligence (AI) models in the modern information society, requires counterbalancing the growing decision power demanded to AI models with risk assessment methodologies. In this paper, we consider the risk of discriminatory decisions and review approaches for discovering discrimination and for designing fair AI models. We highlight the tight relations between discrimination discovery and explainable AI, with the latter being a more general approach for understanding the behavior of black boxes.

62.

[GPP2019]

Giannotti Fosca, Pedreschi Dino, Panigutti Cecilia (2019) - Biopolitica, Pandemia e democrazia. Rule of law nella società digitale. In BIOPOLITICA, PANDEMIA E DEMOCRAZIA Rule of law nella società digitale

Abstract

La crisi sanitaria ha trasformato le relazioni tra Stato e cittadini, conducendo a limitazioni temporanee dei diritti fondamentali e facendo emergere conflitti tra le due dimensioni della salute, come diritto della persona e come diritto della comunità, e tra il diritto alla salute e le esigenze del sistema economico. Per far fronte all’emergenza, si è modificato il tradizionale equilibrio tra i poteri dello Stato, in una prospettiva in cui il tempo dell’emergenza sembra proiettarsi ancora a lungo sul futuro. La pandemia ha inoltre potenziato la centralità del digitale, dall’utilizzo di software di intelligenza artificiale per il tracciamento del contagio alla nuova connettività del lavoro remoto, passando per la telemedicina. Le nuove tecnologie svolgono un ruolo di prevenzione e controllo, ma pongono anche delicate questioni costituzionali: come tutelare la privacy individuale di fronte al Panopticon digitale? Come inquadrare lo statuto delle piattaforme digitali, veri e propri poteri tecnologici privati, all’interno dei nostri ordinamenti? La ricerca presentata in questo volume e nei due volumi collegati propone le riflessioni su questi temi di studiosi afferenti a una moltitudine di aree disciplinari: medici, giuristi, ingegneri, esperti di robotica e di IA analizzano gli effetti dell’emergenza sanitaria sulla tenuta del modello democratico occidentale, con l’obiettivo di aprire una riflessione sulle linee guida per la ricostruzione del Paese, oltre la pandemia. In particolare, questo terzo volume affronta gli aspetti legati all’impatto della tecnologia digitale e dell’IA sui processi, sulla scuola e sulla medicina, con una riflessione su temi quali l’organizzazione della giustizia, le responsabilità, le carenze organizzative degli enti.

64.

[PGG2018]Pedreschi Dino, Giannotti Fosca, Guidotti Riccardo, Monreale Anna , Pappalardo Luca , Ruggieri Salvatore , Turini Franco (2018) - Arxive preprint

Abstract

Black box systems for automated decision making, often based on machine learning over (big) data, map a user's features into a class or a score without exposing the reasons why. This is problematic not only for lack of transparency, but also for possible biases hidden in the algorithms, due to human prejudices and collection artifacts hidden in the training data, which may lead to unfair or wrong decisions. We introduce the local-to-global framework for black box explanation, a novel approach with promising early results, which paves the road for a wide spectrum of future developments along three dimensions: (i) the language for expressing explanations in terms of highly expressive logic-based rules, with a statistical and causal interpretation; (ii) the inference of local explanations aimed at revealing the logic of the decision adopted for a specific instance by querying and auditing the black box in the vicinity of the target instance; (iii), the bottom-up generalization of the many local explanations into simple global ones, with algorithms that optimize the quality and comprehensibility of explanations.